Traditional and digital assessment formats shape how educators, employers, and training teams measure learning, skill, and readiness, and choosing between them affects validity, efficiency, accessibility, and the quality of decisions made from results. In assessment design, a format is the delivery and response structure used to collect evidence, such as paper-based multiple-choice tests, oral exams, essays, simulations, online quizzes, adaptive tests, e-portfolios, or remote proctored exams. I have worked with schools, certification providers, and workplace learning teams that used both paper booklets and cloud testing platforms, and the most important lesson is simple: format is never neutral. It changes what can be measured, how consistently evidence is captured, what accommodations are practical, and how fast feedback reaches learners and decision-makers.

Assessment formats matter because they influence core measurement qualities. Reliability refers to consistency of results; validity asks whether the assessment measures the intended knowledge or skill; fairness considers whether test takers have equitable opportunity to demonstrate competence; security concerns exposure, impersonation, and unauthorized aids; usability affects completion rates and administrative burden. Traditional formats often feel familiar and stable, especially in regulated contexts where printed materials, invigilated rooms, and handwritten responses fit long-standing procedures. Digital formats expand what is possible by adding multimedia prompts, automated scoring, item banks, scheduling flexibility, and analytics that paper systems cannot generate at scale.

This hub article covers assessment formats comprehensively by comparing traditional and digital options, explaining where each works best, and outlining the design choices that determine quality. If you are building classroom exams, professional certification tests, hiring assessments, or internal training checks, the question is not whether one format is universally better. The right decision depends on purpose, construct, stakes, audience, infrastructure, and governance. A reading comprehension test for nine-year-olds, a nursing OSCE station, and a cybersecurity lab challenge should not be designed with the same format assumptions. Understanding the strengths and limits of each format is the foundation for better assessment design and development.

What counts as a traditional or digital assessment format

Traditional assessment formats typically include paper-based selected-response tests, short-answer and essay exams written by hand, oral questioning in person, practical demonstrations observed live, and portfolio submissions assembled physically. These formats are often associated with scheduled sessions in controlled rooms, manual invigilation, and human scoring or moderation. They remain common in K-12 classrooms, university finals, civil service testing, and trade evaluations where physical performance must be seen directly. Traditional does not mean outdated. A well-designed paper test can produce defensible results, particularly when content is stable, access to devices is uneven, and the construct does not require interactivity.

Digital assessment formats include online quizzes in learning management systems, computer-based testing at test centers, mobile-delivered checks, multimedia item types, simulations, game-based tasks, e-portfolios, remote proctored exams, and computer-adaptive testing. Platforms such as Moodle, Canvas, Questionmark, Inspera, and Pearson VUE environments support item delivery, timing, scoring, accommodation settings, and reporting. Digital formats range from simple multiple-choice quizzes to complex virtual labs that log process data such as click paths, time on task, and error patterns. That process data can strengthen diagnostic insight, but only if it is interpreted carefully and aligned with the intended construct.

The practical distinction is not paper versus screen alone. The deeper difference is the evidence model. Traditional formats usually collect end products: the marked answer sheet, the handwritten essay, the observed performance. Digital formats can collect both products and process traces. For example, a mathematics platform can record whether a learner used hints, revised intermediate steps, or ran out of time after repeated misreads. Those details can support feedback and item review, but they also raise privacy, data retention, and interpretation questions that assessment teams must manage explicitly.

Strengths of traditional assessment formats

Traditional formats offer three advantages that are still hard to dismiss: familiarity, procedural simplicity, and resilience when technology fails. In many settings, paper exams can reduce test anxiety because candidates know what to expect. Handwritten essays and supervised in-person oral exams can feel more authentic to faculty and assessors who are accustomed to judging reasoning, communication, and professional demeanor directly. For organizations without mature digital infrastructure, printing secure papers and using trained invigilators may be operationally safer than launching a new platform during a high-stakes cycle.

Paper-based delivery can also support fairness where device quality, connectivity, or digital literacy vary widely. I have seen otherwise capable candidates underperform in online pilots because they were unfamiliar with scrolling exhibits, split-screen views, or drag-and-drop interactions, even though the construct being tested was not digital navigation. In such cases, the format introduced irrelevant difficulty. Traditional practical assessments also remain essential for certain constructs. A culinary skills test, welding inspection, dance performance, or patient interaction in a clinical station often requires direct observation of physical technique, timing, safety behavior, and interpersonal judgment in real space.

Another benefit is transparency in review and appeals. Physical scripts can be double-marked, annotated, and archived in straightforward ways. External moderators can compare handwriting, examiner notes, and rubric application without relying on proprietary system logs. That does not make traditional formats inherently more secure or valid, but it does make their chain of evidence more visible to some stakeholders. In regulated environments where public trust matters, visible procedures can be as important as technical sophistication.
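One way to put a number on double-marking consistency is an agreement statistic such as Cohen's kappa, which corrects raw agreement for the agreement two markers would reach by chance. The sketch below is illustrative only: the function name and the rubric bands are hypothetical, and it assumes each script receives a single categorical grade from each marker.

```python
from collections import Counter


def cohens_kappa(marks_a, marks_b):
    """Cohen's kappa for two markers grading the same scripts with categorical bands."""
    if len(marks_a) != len(marks_b) or not marks_a:
        raise ValueError("Both markers must grade the same non-empty set of scripts.")
    n = len(marks_a)
    # Observed agreement: share of scripts given the same band by both markers.
    observed = sum(a == b for a, b in zip(marks_a, marks_b)) / n
    # Chance agreement: product of each marker's band proportions, summed over bands.
    freq_a, freq_b = Counter(marks_a), Counter(marks_b)
    expected = sum(freq_a[band] * freq_b[band] for band in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both markers used one identical band for every script
    return (observed - expected) / (1 - expected)


# Hypothetical rubric bands for eight double-marked scripts.
first_marker = ["A", "B", "B", "C", "A", "C", "B", "A"]
second_marker = ["A", "B", "C", "C", "A", "B", "B", "A"]
kappa = cohens_kappa(first_marker, second_marker)
print(round(kappa, 3))
```

Kappa treats every disagreement as equally serious, so for ordered bands a weighted variant may be more appropriate. That choice belongs to the moderation policy, not to this sketch.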

Strengths of digital assessment formats

Digital assessment formats excel when scale, speed, and richer evidence are required. Automated scoring of selected-response items can cut turnaround time from days to minutes. Large item banks allow multiple equivalent forms, reducing item overexposure. Randomization, linear-on-the-fly assembly, and adaptive routing can improve security and precision. Accessibility settings such as zoom, color contrast, keyboard navigation, screen reader compatibility, and extended time can be applied more consistently than in ad hoc paper processes. For distributed workforces and online programs, digital delivery also reduces venue costs and scheduling bottlenecks.
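As a rough illustration of randomized form assembly from an item bank, the sketch below draws a fixed number of items per content domain and shuffles their order. Everything in it is an assumption for illustration: the bank structure, the domain names, the blueprint counts, and the idea of seeding by a candidate identifier. It balances content only; it does not statistically equate forms for difficulty, which a real program would need to address separately.

```python
import random


def assemble_form(item_bank, blueprint, seed):
    """Draw a randomized form that meets a per-domain item count blueprint."""
    rng = random.Random(seed)  # seeded so the same form can be rebuilt for audits
    form = []
    for domain, count in blueprint.items():
        pool = [item for item in item_bank if item["domain"] == domain]
        if len(pool) < count:
            raise ValueError(f"Not enough items in domain {domain!r}")
        form.extend(rng.sample(pool, count))  # sample without replacement
    rng.shuffle(form)  # randomize presentation order across domains
    return form


# Hypothetical bank: 10 items in each of three domains.
item_bank = [
    {"id": f"{domain[:3].upper()}-{i:02d}", "domain": domain}
    for domain in ("algebra", "geometry", "statistics")
    for i in range(1, 11)
]
blueprint = {"algebra": 4, "geometry": 3, "statistics": 3}
form_a = assemble_form(item_bank, blueprint, seed="candidate-1001")
print([item["id"] for item in form_a])
```

Seeding the generator is a design choice that trades unpredictability for reproducibility: it lets an appeals reviewer regenerate exactly what a candidate saw, provided the seed itself is stored securely.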

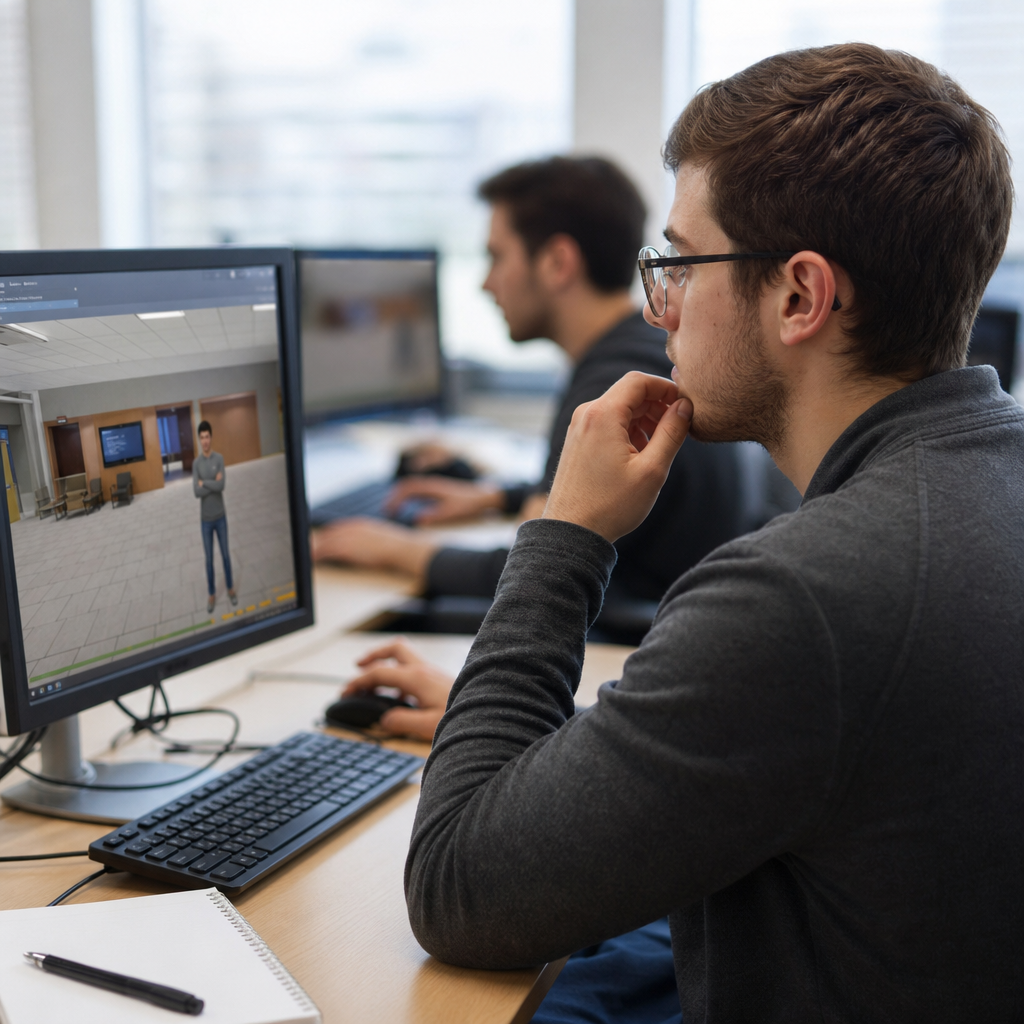

The strongest case for digital formats is not convenience alone; it is improved alignment between task and construct. If you want to assess data interpretation, use interactive dashboards. If you want to assess software troubleshooting, present a simulated interface. If you want to assess spoken language, capture audio responses for standardized rating and moderation. Modern platforms can embed video, branching scenarios, and virtual labs that approximate job-relevant decisions more closely than static prompts. In corporate compliance training, for instance, scenario-based digital assessments often reveal whether employees can apply policy, not just recall definitions.

Digital systems also support continuous improvement. Item statistics such as difficulty index, discrimination, distractor performance, omission rates, and differential performance by subgroup can be reviewed quickly after administration. Psychometric analysis that once required manual data preparation is now built into many platforms or exported to tools such as R, Winsteps, and jMetrik. That means weak items can be flagged earlier, blueprints can be monitored more accurately, and pass-score decisions can be informed by stronger evidence than a stack of answer sheets reviewed weeks later.
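To make two of these statistics concrete, the sketch below computes a classical difficulty index (proportion correct) and a discrimination index for each item. It assumes dichotomously scored items (0 or 1) and uses the correlation between the item score and the rest score (total minus that item) as the discrimination measure; platforms may use other variants. The response matrix is hypothetical.

```python
from statistics import mean, pstdev


def item_statistics(responses):
    """responses: one list of 0/1 item scores per candidate (rows = candidates)."""
    n_items = len(responses[0])
    stats = []
    for j in range(n_items):
        item = [row[j] for row in responses]
        rest = [sum(row) - row[j] for row in responses]  # total excluding this item
        difficulty = mean(item)  # proportion of candidates answering correctly
        sd_item, sd_rest = pstdev(item), pstdev(rest)
        if sd_item == 0 or sd_rest == 0:
            discrimination = None  # undefined when there is no score variation
        else:
            cov = mean(i * r for i, r in zip(item, rest)) - mean(item) * mean(rest)
            discrimination = cov / (sd_item * sd_rest)  # item-rest correlation
        stats.append(
            {"item": j + 1, "difficulty": difficulty, "discrimination": discrimination}
        )
    return stats


# Hypothetical scored responses: 6 candidates x 4 items.
responses = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [1, 0, 1, 0],
    [1, 0, 0, 1],
]
stats = item_statistics(responses)
for row in stats:
    print(row)
```

In this toy data every candidate answers item 1 correctly, so its discrimination is undefined; that is the kind of item a review meeting might flag as too easy to contribute useful information. With only six candidates the numbers are not stable enough to act on.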

Key tradeoffs: validity, reliability, fairness, security, and cost

No assessment format wins on every criterion. The central design task is balancing tradeoffs against purpose and stakes. A useful way to compare formats is to examine the five decision dimensions most teams confront.

| Dimension | Traditional formats | Digital formats |
|---|---|---|
| Validity | Strong for essays, oral exams, and direct observation when trained raters use clear rubrics | Strong for simulations, multimedia tasks, and adaptive testing when technology matches the construct |
| Reliability | Can vary with human marking and administration conditions | High for automated scoring and standardized delivery; variable for poorly calibrated tech-enabled tasks |
| Fairness | Helps where digital access is unequal; accommodations may be slower to implement consistently | Supports scalable accommodations, but can introduce device, bandwidth, or interface bias |
| Security | Risk of paper leakage, impersonation, and inconsistent invigilation | Risk of item harvesting, remote cheating, and privacy concerns from proctoring technologies |
| Cost | Printing, shipping, storage, and manual scoring increase recurring costs | Platform and setup costs are higher upfront, but marginal delivery and reporting costs are often lower |

Validity should lead the decision. If a digital interface changes performance because candidates must master navigation rather than the target skill, validity drops. If a paper test cannot represent the task realistically, validity also drops. Reliability depends on scoring consistency and administration control. Fairness depends on access, language load, accommodations, and bias review. Security must be proportionate to stakes; a weekly low-stakes quiz does not need the same controls as licensure testing. Cost should be viewed across the full lifecycle, including authoring, training, delivery, scoring, reporting, appeals, and maintenance.
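The lifecycle view of cost can be reduced to simple break-even arithmetic: a fixed cost plus a per-candidate cost for each option. The sketch below is a simplification under stated assumptions. It treats costs as linear in candidate volume, and every figure in it is hypothetical, not drawn from any real program.

```python
import math


def break_even_candidates(fixed_a, per_candidate_a, fixed_b, per_candidate_b):
    """Smallest candidate count at which option B costs no more than option A.

    Returns None if B never catches up under the linear cost assumption.
    """
    if fixed_b <= fixed_a:
        return 0  # B is already no more expensive before any candidate sits
    if per_candidate_b >= per_candidate_a:
        return None  # higher setup and no per-candidate saving
    return math.ceil((fixed_b - fixed_a) / (per_candidate_a - per_candidate_b))


# Hypothetical figures: paper has low setup but high per-candidate cost
# (printing, shipping, manual scoring); digital is the reverse.
paper_fixed, paper_per_candidate = 2_000, 12
digital_fixed, digital_per_candidate = 20_000, 3

volume = break_even_candidates(
    paper_fixed, paper_per_candidate, digital_fixed, digital_per_candidate
)
print(volume)
```

With these made-up numbers the digital option only pays back at 2,000 candidates, which shows why a small program and a national one can reasonably reach opposite conclusions from the same cost structure.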

Choosing the right assessment format for the purpose

The best way to select an assessment format is to start with the intended use of results. Diagnostic assessments need fast, fine-grained feedback, so digital quizzes with item-level reporting often outperform paper tests. Formative assessments benefit from low friction, immediate feedback, and repeated use, making digital delivery especially effective in classrooms and learning platforms. Summative assessments, especially high-stakes ones, need stronger controls, documented standardization, and defensible scoring, which can be achieved in either format if governance is sound. Performance assessments require realistic tasks and trained raters, whether evidence is collected live, on video, or through simulation.

Audience characteristics matter just as much. Younger learners may need simpler interfaces or teacher-mediated administration. Adult professionals may accept remote digital testing if the workflow is clear and the platform is stable. Candidates in low-bandwidth regions may need downloadable secure browsers, local test centers, or paper fallback plans. Accessibility should be designed in from the start using standards such as WCAG and compatibility testing with assistive technologies. A format that works for most users but excludes a critical group is not well designed, no matter how efficient it appears operationally.

Governance is the final filter. High-stakes programs should document blueprint alignment, standard setting, administration rules, accommodation procedures, incident response, and score interpretation. In my experience, format failures rarely come from the format alone. They come from weak implementation: unclear rubrics, untested devices, insufficient item review, poor staff training, or unrealistic assumptions about user behavior. Good assessment design and development treats format as one component in a larger evidence system.

How this hub connects the broader assessment formats topic

Assessment formats are a broad subtopic, and this hub should anchor more focused articles that go deeper into individual choices. Useful supporting pages include guides to multiple-choice item design, essay and constructed-response scoring, oral assessments, practical performance tasks, online proctoring, computer-adaptive testing, simulation-based assessment, portfolio assessment, accessibility in digital testing, and psychometric quality assurance. Each of those areas deserves dedicated treatment because the design rules differ. For example, writing plausible distractors for selected-response items is a different craft from building a rubric for an observed performance.

The central takeaway across those pages is consistency between purpose, evidence, and consequence. Good assessment formats produce interpretable evidence that supports the decision being made. If the decision is promotion, certification, placement, remediation, or hiring, the format must be robust enough for that use. If the decision is simply whether a learner understood this week’s lesson, the format can be lighter and faster. Hub pages work best when they help readers navigate these distinctions clearly. Use this article as the starting point, then explore the format-specific guidance most relevant to your context.

Traditional and digital assessment formats are not rivals in a winner-takes-all contest; they are tools with different strengths, limitations, and implementation demands. Traditional formats remain valuable for direct observation, familiar administration, and contexts where technology access is uneven or procedural visibility matters. Digital formats provide faster scoring, richer task types, stronger analytics, and scalable delivery when infrastructure and governance are in place. The right choice depends on the construct being measured, the stakes of the decision, the characteristics of the audience, and the operational realities of the organization.

For assessment design and development teams, the most effective strategy is usually intentional combination rather than rigid loyalty to one format. Use digital quizzes for frequent feedback, simulations for applied decisions, and live or recorded performance tasks when physical or interpersonal skills must be judged directly. Protect validity by ensuring the format does not introduce irrelevant difficulty. Protect fairness with accessibility planning, accommodation procedures, and bias review. Protect trust with transparent scoring rules, security controls proportionate to stakes, and continuous evaluation of item and test performance over time.

If you are reviewing your assessment formats now, begin with a simple audit: what decision is each assessment supporting, what evidence is truly needed, and does the current format capture that evidence efficiently and fairly? Those questions will lead you to better choices than any blanket preference for paper or screen. From there, build out the rest of this assessment formats hub by exploring the specific methods most relevant to your learners, candidates, or employees, and refine each one with evidence, not habit.

Frequently Asked Questions

What is the main difference between traditional and digital assessment formats?

The main difference is how evidence of learning or skill is delivered, completed, and scored. Traditional assessment formats usually include paper-based tests, handwritten essays, oral exams conducted in person, and other formats that rely on physical materials or face-to-face administration. Digital assessment formats use computers, tablets, or online platforms to present tasks and capture responses, whether through online quizzes, simulations, adaptive tests, e-portfolios, or remotely proctored exams. That difference in delivery affects much more than convenience. It influences speed of scoring, test security options, accessibility features, the kinds of skills that can be measured, and how easily results can be analyzed.

In practice, traditional formats are often valued for familiarity, simplicity, and lower technical dependence. They can work well in settings where internet access is limited or where handwritten, spoken, or in-person performance is important to observe directly. Digital formats, however, offer clear advantages when organizations need faster turnaround, scalable delivery, automated scoring, richer analytics, and more flexible item types. For example, a paper multiple-choice exam may be straightforward to administer, but an online adaptive test can adjust question difficulty in real time and provide more precise measurement with fewer items. Neither format is automatically better in every context. The strongest choice depends on the purpose of the assessment, the population being assessed, the resources available, and the decisions that will be made from the results.
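For readers curious how an adaptive test "adjusts difficulty", the sketch below shows one common idea: pick the unused item that is most informative at the current ability estimate. It assumes a Rasch (one-parameter logistic) model, in which an item is most informative when its difficulty is closest to the candidate's ability. The item pool and difficulty values are hypothetical, and the sketch deliberately omits the ability re-estimation step, exposure control, and content balancing that operational adaptive tests also need.

```python
import math


def rasch_probability(theta, difficulty):
    """Probability of a correct response under the Rasch model."""
    return 1.0 / (1.0 + math.exp(-(theta - difficulty)))


def item_information(theta, difficulty):
    """Fisher information of a Rasch item at ability theta: p * (1 - p)."""
    p = rasch_probability(theta, difficulty)
    return p * (1.0 - p)


def next_item(theta, item_difficulties, administered):
    """Return the id of the most informative item not yet administered, or None."""
    candidates = {
        item_id: b for item_id, b in item_difficulties.items()
        if item_id not in administered
    }
    if not candidates:
        return None
    return max(candidates, key=lambda item_id: item_information(theta, candidates[item_id]))


# Hypothetical calibrated pool (difficulty on the same logit scale as ability).
pool = {"easy": -1.5, "medium": 0.0, "hard": 1.5}
first_pick = next_item(0.2, pool, administered=set())
second_pick = next_item(1.4, pool, administered={"medium"})
print(first_pick, second_pick)
```

Because each item is chosen where it is most informative, fewer items are needed to reach a given precision than with a fixed form, which is the efficiency gain described above.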

Which assessment format is more valid and reliable: traditional or digital?

Validity and reliability do not come from the format alone; they come from how well the assessment is designed, delivered, and interpreted for its intended use. A poorly written digital test is not more valid just because it uses technology, and a paper exam is not inherently more reliable just because it is familiar. Validity asks whether the assessment actually measures what it claims to measure and supports the decisions made from the scores. Reliability asks whether results are consistent enough to be trusted. Both traditional and digital formats can be highly valid and reliable when they are aligned to clear learning objectives, use appropriate item types, apply consistent administration procedures, and are supported by sound scoring methods.

That said, the format can influence validity and reliability in important ways. Digital formats may improve reliability through standardized delivery, automated timing, consistent instructions, and reduced human scoring error for objective items. They can also enhance validity when the target skill is best demonstrated in a digital environment, such as software use, data analysis, interactive problem solving, or decision-making in a simulation. Traditional formats may better support validity when the construct involves handwriting, live oral communication, physical demonstration, or contexts where technology would interfere with authentic performance. The key is fit. If the assessment format changes the nature of the task in a way that distorts the underlying skill being measured, validity suffers. Decision-makers should evaluate not just the surface format, but also test blueprinting, accessibility, scoring quality, administration conditions, and evidence that results are accurate and fair.

What are the biggest advantages of digital assessments compared with traditional ones?

Digital assessments offer several major advantages, especially in environments that need speed, scale, flexibility, and richer data. One of the biggest benefits is efficiency. Online delivery can reduce printing, shipping, and manual handling, while automated scoring can dramatically shorten the time between assessment and results for selected-response items. Digital systems also make it easier to administer tests across multiple locations, support remote participation, and collect performance data at a detailed level. Instead of receiving only a total score, educators and employers can often review item-level results, time-on-task data, domain performance, and trends across groups, which supports better instructional and talent decisions.

Another major advantage is expanded assessment capability. Digital platforms can present interactive tasks that are difficult or impossible to replicate on paper, including drag-and-drop items, branching scenarios, multimedia prompts, coding tasks, virtual labs, and adaptive testing. They can also improve accessibility when designed well, offering features such as adjustable font sizes, text-to-speech, color contrast controls, keyboard navigation, extended time settings, and compatibility with assistive technologies. In addition, digital assessments are easier to update, version, and secure through item randomization, lockdown browsers, identity verification, and audit trails. These strengths make digital formats especially valuable when organizations want agile testing programs, more personalized measurement, and faster feedback loops. Still, those advantages are fully realized only when the technology is reliable, users are prepared, and the assessment experience is designed to minimize technical barriers and unnecessary cognitive load.

When are traditional assessment formats still the better choice?

Traditional assessment formats remain the better choice in many situations, particularly when simplicity, authenticity, or equity of access is the priority. If learners or candidates do not have reliable access to devices, internet connectivity, or a suitable testing environment, paper-based or in-person formats may be more practical and fair. Traditional methods can also be preferable when the skill being evaluated is inherently non-digital. For example, an in-person oral exam may provide the clearest evidence of spontaneous speaking ability, and a handwritten response may be relevant in contexts where written production without technological assistance matters. In some high-stakes settings, face-to-face observation may also strengthen confidence in identity verification and reduce concerns about remote proctoring privacy or technical disruptions.

Traditional formats can also support better performance when the audience is less comfortable with technology or when digital navigation could introduce construct-irrelevant difficulty. If a test is intended to measure subject knowledge rather than computer fluency, forcing a digital format on unprepared participants can skew results. In addition, some educators and evaluators prefer traditional formats for open-ended tasks that benefit from slower reflection, personal interaction, or nuanced qualitative judgment. Oral defenses, studio critiques, practical demonstrations, and paper annotations may still provide richer evidence than a standardized online form. The broader lesson is that traditional does not mean outdated. In many cases, it means direct, stable, and appropriately matched to the assessment purpose. The best assessment strategy often includes traditional elements where they preserve authenticity, reduce access barriers, or improve confidence in the results.

How should educators, employers, and training teams choose between traditional and digital assessment formats?

The most effective way to choose is to start with purpose, not platform. Assessment leaders should first define what they need to measure, why they need to measure it, and what decisions will be made from the results. Are they screening applicants, certifying competence, monitoring progress, diagnosing gaps, or evaluating readiness for a specific role or course? Once the purpose is clear, they can identify the evidence required. Some goals are well served by efficient selected-response testing, while others demand essays, performance tasks, portfolios, simulations, or live demonstrations. From there, the format should be selected based on alignment with the construct, the characteristics of the test takers, operational constraints, accessibility obligations, scoring needs, and the stakes of the decision.

A strong decision process also weighs practical and ethical considerations. Teams should ask whether the format is accessible to all participants, whether it introduces unnecessary bias, whether administrators can support it consistently, and whether the available technology is dependable enough for the context. They should examine security requirements, turnaround expectations, budget, staffing, and the need for analytics or rapid reporting. Pilot testing is especially valuable because it reveals usability issues, timing problems, technical failures, and scoring inconsistencies before full implementation. In many cases, the smartest solution is not choosing one side exclusively, but building a blended assessment strategy. For example, a program might use digital quizzes for rapid formative feedback, simulations for applied skill checks, and in-person demonstrations or interviews for final validation. The best format is the one that produces credible, fair, and useful evidence while respecting the realities of the people taking and using the assessment.