Computer-based testing is the delivery, administration, scoring, and reporting of assessments through digital devices rather than paper booklets. In practice, that means candidates answer questions on a desktop, laptop, tablet, or secure kiosk, while the testing system handles timing, navigation, response capture, and often at least part of the scoring workflow. Within assessment design and development, computer-based testing sits at the center of modern assessment formats because it affects item writing, test security, accessibility, psychometrics, operations, and candidate experience all at once. When organizations ask what computer-based testing is, they are usually also asking what it changes compared with paper testing, when it is the right format, and how it connects to online exams, remote proctoring, adaptive testing, simulations, and performance tasks.

I have worked on transitions from paper forms to digital delivery for certification, licensure, and education programs, and the pattern is consistent: teams initially view the shift as a technology project, then realize it is an assessment design project with technical dependencies. The format changes the evidence you can collect. It changes the speed of score reporting. It changes the way accommodations are delivered. It changes the risks, from browser lock-down failures to item harvesting at scale. Most importantly, it changes what kinds of knowledge and skills can be measured well. A multiple-choice test on paper can become a richer exam on screen with hot spots, drag-and-drop classification, spreadsheet tasks, audio prompts, or branching scenarios, but only if the blueprint, item specifications, and scoring rules are deliberately redesigned.

As a hub within assessment formats, this article explains the core definition of computer-based testing, the major delivery models, the common item types, the operational benefits and limitations, and the design decisions that separate a usable digital exam from a frustrating one. If you are comparing assessment formats for a program, this is the starting point. It gives you the vocabulary and decision criteria needed before you go deeper into related topics such as fixed-form exams, linear-on-the-fly testing, remote proctoring, item banking, technology-enhanced items, simulation-based assessment, and accessibility in test delivery.

How computer-based testing works in practice

Computer-based testing, often abbreviated CBT, covers several delivery arrangements. A test may be administered at a physical test center on managed workstations, at a school or workplace using local devices, or remotely through a web platform with or without live or automated proctoring. The defining feature is not location but digital administration. The system presents items, records responses, enforces timing rules, stores metadata such as response time and navigation events, and transmits data for scoring and reporting. In more advanced implementations, the system also randomizes content, assembles forms from an item bank, or adapts the difficulty level based on candidate performance.

A typical CBT workflow starts with an assessment blueprint that specifies the content domains, cognitive demands, and form constraints. Item writers then develop questions against item specifications, often in an authoring platform that supports metadata tagging for domain, difficulty, stimulus type, exposure limits, and accessibility features. Test assembly follows. Some programs publish fixed forms, while others use multiple equivalent forms or algorithmic assembly. Before launch, teams validate rendering across devices, browsers, and assistive technologies, run usability checks, and establish operational rules for authentication, breaks, incident handling, and score release. During administration, the platform must protect data integrity, maintain uptime, and preserve a complete audit trail.

The strongest programs treat delivery technology and measurement design as inseparable. For example, if a mathematics exam includes equation editors or graphing tasks, the team must define whether mathematical precision is judged by a rubric, an exact parser, or both. If a language exam includes listening items, the platform must control audio playback and bandwidth assumptions. If a clinical certification includes scenario-based tasks, the design team must decide whether the simulation measures decision quality, process efficiency, or both. These are not cosmetic decisions. They determine validity, reliability, fairness, and operational cost.

Major assessment formats within computer-based testing

Computer-based testing is not a single format. It is a delivery environment that supports several assessment formats, each suited to different measurement goals. The most common is the fixed-form exam, where every candidate receives the same set of items or one of several parallel forms. Fixed forms remain standard in education and credentialing because they simplify equating, review, and post-administration analysis. A second format is linear-on-the-fly testing, where each candidate receives a unique form assembled in real time from a calibrated item bank according to blueprint rules. This reduces overexposure of specific items while preserving content balance.
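To make the idea of linear-on-the-fly assembly concrete, here is a minimal sketch in Python. It assumes a blueprint that only specifies how many items to draw per content domain. The `Item` class, the `assemble_form` function, and the sample bank are hypothetical names used for illustration. Operational systems typically add constraints this sketch leaves out, such as difficulty targets, exposure limits, and enemy-item rules.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    item_id: str
    domain: str


def assemble_form(bank, blueprint, rng):
    """Draw a form that satisfies the per-domain item counts in the blueprint."""
    form = []
    for domain, count in blueprint.items():
        pool = [item for item in bank if item.domain == domain]
        if len(pool) < count:
            raise ValueError(f"Not enough items in domain {domain!r}")
        # Sample without replacement so no item repeats within a form.
        form.extend(rng.sample(pool, count))
    # Shuffle so items are not presented grouped by domain.
    rng.shuffle(form)
    return form


# Hypothetical bank: three domains with ten items each.
bank = [
    Item(f"{domain}-{i:02d}", domain)
    for domain in ("algebra", "geometry", "statistics")
    for i in range(10)
]
blueprint = {"algebra": 3, "geometry": 2, "statistics": 2}

# Each candidate gets a separately seeded draw, so forms differ in content
# while matching the same blueprint.
form_a = assemble_form(bank, blueprint, random.Random(1))
form_b = assemble_form(bank, blueprint, random.Random(2))
```

Both forms contain seven items with the same domain counts, which is the content balance the blueprint is meant to guarantee even though the specific items differ.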

Adaptive testing is another major format. In computerized adaptive testing, the algorithm selects the next item based on the candidate’s current estimated ability. Properly implemented, adaptive testing can shorten exams while maintaining measurement precision. Large testing programs use item response theory models, exposure controls, and content balancing constraints to make this work safely. However, adaptive testing demands strong psychometric calibration, larger item pools, and careful communication with candidates who may perceive the exam as inconsistent because different people see different questions.
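The selection step can be sketched as follows. This example assumes a two-parameter logistic (2PL) item response theory model and a maximum-information selection rule, which is one common approach rather than the method of any particular program. The item pool, parameter values, and function names are hypothetical, and the exposure controls and content balancing mentioned above are deliberately omitted.

```python
import math


def prob_correct(theta, a, b):
    """2PL probability of a correct response at ability theta."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))


def item_information(theta, a, b):
    """Fisher information of a 2PL item at ability theta."""
    p = prob_correct(theta, a, b)
    return a * a * p * (1.0 - p)


def select_next_item(theta, pool, administered):
    """Pick the unadministered item with the most information at theta."""
    candidates = {k: v for k, v in pool.items() if k not in administered}
    if not candidates:
        raise ValueError("Item pool exhausted")
    return max(candidates, key=lambda k: item_information(theta, *candidates[k]))


# Hypothetical pool: item id -> (discrimination a, difficulty b).
pool = {"easy": (1.0, -1.5), "medium": (1.0, 0.0), "hard": (1.0, 1.5)}

first = select_next_item(0.1, pool, set())        # "medium"
second = select_next_item(0.1, pool, {"medium"})  # "hard"
```

With equal discrimination values, the most informative item is the one whose difficulty is closest to the current ability estimate, which is why a candidate estimated near 0.1 sees the medium item first.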

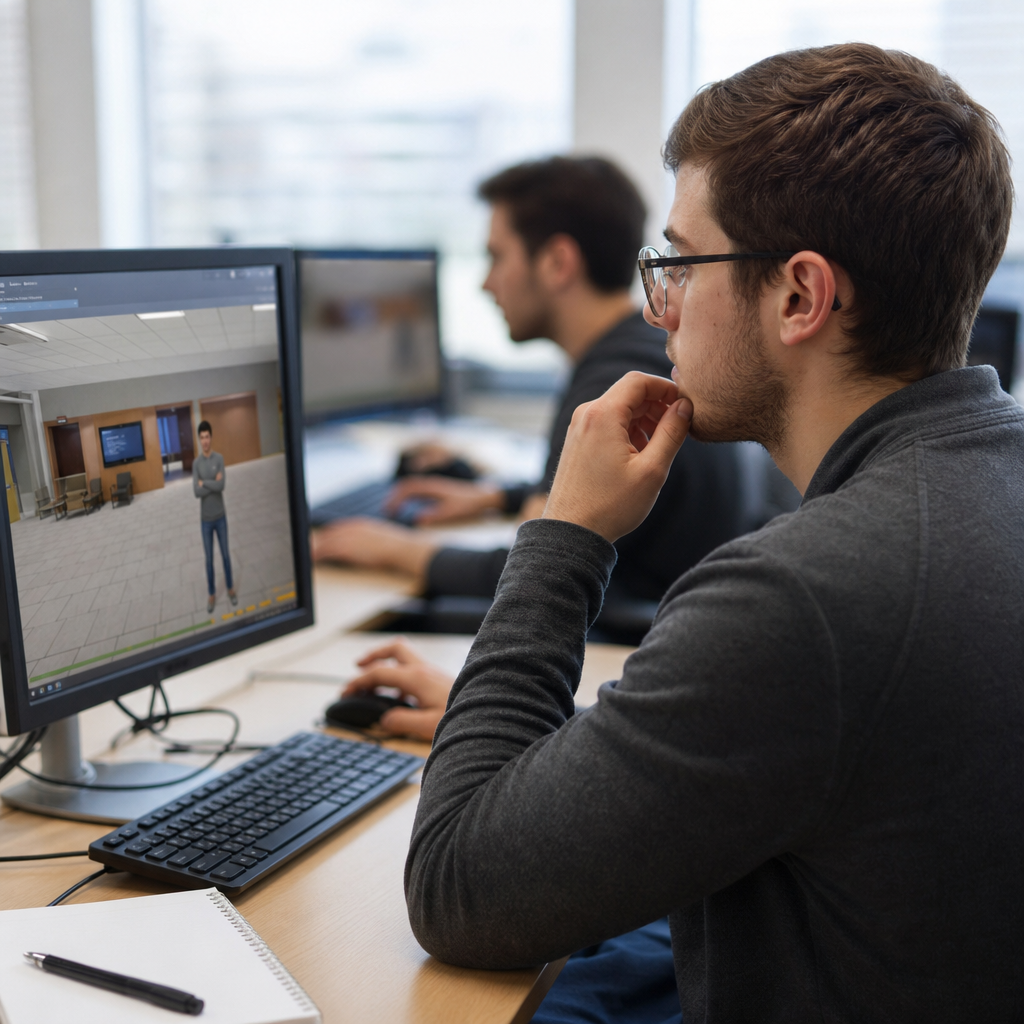

Beyond selected-response formats, CBT supports technology-enhanced items and performance-based tasks. Technology-enhanced items include drag-and-drop, hot spot, matching grids, graphing, numeric entry, file interaction, and multimedia interpretation. Performance tasks can ask candidates to analyze a case, complete workflow steps in a simulated interface, or produce a written or spoken response. These formats are especially valuable when the construct includes applied decision-making, procedural fluency, or communication. They also increase design complexity, scoring cost, and accessibility obligations.

| Format | Best use | Main advantage | Main limitation |
|---|---|---|---|
| Fixed-form CBT | Stable programs with established blueprints | Simple administration and review | Higher item exposure risk |
| Linear-on-the-fly | Large item banks and frequent testing windows | Unique forms with content control | More assembly and quality control work |
| Adaptive testing | Precise measurement across wide ability ranges | Shorter tests with efficient targeting | Requires strong calibration and communication |
| Simulation or performance task | Applied skills and decision processes | Higher fidelity evidence | Higher development and scoring cost |

Choosing among these assessment formats depends on the claim you want the score to support. If the decision is pass or fail for a regulated credential, comparability, security, and defensibility usually outweigh novelty. If the goal is classroom diagnosis, richer interactions and immediate feedback may matter more. If the target skill is software proficiency, a simulated environment can measure authentic performance better than a bank of recall questions. The point is straightforward: computer-based testing expands the menu of possible formats, but the right format is the one that best matches the intended interpretation of scores.

Benefits of computer-based testing for programs and candidates

The biggest operational benefit of computer-based testing is control. Digital delivery makes it easier to manage timing rules, randomize content, enforce workflow constraints, and capture complete response data. Programs gain faster scoring and reporting, especially when selected-response items can be machine scored immediately. In high-volume environments, that speed matters. Certification bodies can process candidates more efficiently. Schools can shorten the cycle between testing and instructional response. Employers can integrate results into hiring or training systems without manual data entry.

CBT also supports richer evidence collection. On paper, an answer sheet tells you what option the candidate selected. On screen, the system can record sequence, latency, flagging behavior, revisits, keystrokes in constructed response, and interactions with tools. Not all of that data should influence scoring, but it is useful for usability analysis, irregularity review, and research. For example, unusually fast responding across difficult items can signal disengagement or possible preknowledge. Long dwell time on a confusing interface may signal a design flaw rather than a knowledge gap.
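As an illustration of how latency data might feed an irregularity review, the sketch below flags items answered faster than a per-item time threshold. The threshold values, item identifiers, and function names are hypothetical. In practice, thresholds would be set from empirical response-time distributions, and a flag would prompt review rather than affect scoring.

```python
def flag_rapid_responses(latencies, thresholds):
    """Return the ids of items answered faster than their time threshold."""
    return [
        item_id
        for item_id, seconds in latencies.items()
        if seconds < thresholds[item_id]
    ]


def rapid_response_rate(latencies, thresholds):
    """Share of a candidate's responses that fall below the thresholds."""
    if not latencies:
        return 0.0
    return len(flag_rapid_responses(latencies, thresholds)) / len(latencies)


# Hypothetical response times in seconds for one candidate.
latencies = {"Q1": 2.1, "Q2": 45.0, "Q3": 3.0, "Q4": 60.5}
# Hypothetical minimum plausible times per item.
thresholds = {"Q1": 5.0, "Q2": 5.0, "Q3": 5.0, "Q4": 10.0}

flagged = flag_rapid_responses(latencies, thresholds)  # ["Q1", "Q3"]
rate = rapid_response_rate(latencies, thresholds)      # 0.5
```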

For candidates, the benefits often include convenience, flexible scheduling, clearer presentation, and faster results. Accessibility supports can be integrated more consistently than in paper workflows. Common accommodations include adjustable font size, color contrast controls, screen reader compatibility, keyboard navigation, extended time, and separate room settings at test centers. Digital platforms can also reduce clerical errors by preventing accidentally skipped items when the design requires completion, though that must be balanced against the need to allow strategic skipping when the construct permits it.

Another practical advantage is multimedia. A paper test cannot authentically deliver pronunciation clips, software interfaces, animated processes, or dynamic data displays. A computer-based test can. That matters when the construct includes listening comprehension, data interpretation, or process monitoring. In one workforce assessment redesign I supported, moving from paper screenshots to an interactive spreadsheet task improved alignment with actual job demands and reduced complaints from subject matter experts who had long argued that the old format measured memorization more than competence.

Limitations, risks, and common implementation mistakes

Computer-based testing is not automatically better than paper. The most common mistake is assuming digital delivery improves validity by itself. It does not. A poorly written multiple-choice item remains poor on screen, and a flashy interaction can introduce construct-irrelevant variance if success depends on mouse dexterity, screen size, or familiarity with a specific interface rather than the intended skill. If candidates fail because the drag-and-drop widget is confusing, the test is measuring usability friction, not competence.

Security risk also changes shape in CBT. Paper forms can be stolen or photocopied, but digital items can be harvested rapidly through screenshots, memorization networks, browser exploits, or organized proxy testing. Programs need layered controls: identity verification, secure browsers where appropriate, proctoring protocols, exposure monitoring, forensic analysis, and disciplined item refresh cycles. Remote delivery introduces additional complexity because home networks, personal devices, and private spaces vary widely. Some programs overcorrect with intrusive controls that raise fairness and privacy concerns without materially improving security.

Technical failure is another real constraint. Uptime, autosave behavior, recovery after disconnects, and compatibility across operating systems are not side details. They are part of exam validity because interruptions change candidate performance. Established programs define service-level expectations, test launch procedures, rollback plans, and incident classifications before administration begins. They also pilot with realistic device conditions instead of ideal lab environments. I have seen seemingly minor issues, such as browser zoom behavior or hidden scroll bars, create measurable score impacts when they affect large groups of candidates.

Cost can also surprise teams. While CBT reduces printing and shipping, it introduces spending on platform licensing, psychometric support, item banking, test center contracts or proctoring, accessibility remediation, and continuous quality assurance. Simulation-based assessment is especially resource intensive because every branch, asset, and scoring rule must be designed, tested, and maintained. Programs should evaluate total cost of ownership, not just launch cost. A sustainable assessment format is one the organization can secure, update, and defend year after year.

Design principles that make computer-based tests effective

Effective computer-based testing starts with a clear construct definition. Before choosing item types, define what knowledge, skill, or ability the assessment is intended to measure and what evidence would support that claim. Then select the simplest format that captures that evidence well. Selected-response items remain highly effective for many knowledge and judgment constructs. Technology-enhanced items are justified when they increase fidelity, not when they merely look modern. This principle keeps development efficient and reduces avoidable accessibility barriers.

Usability is a measurement issue, not just a design preference. Navigation should be predictable, instructions brief and specific, and tools consistent across items. Candidates should not have to relearn the interface on every screen. High-stakes programs usually provide tutorials or practice tests so examinees can experience the controls before test day. That is more than a courtesy: it reduces construct-irrelevant variance caused by unfamiliarity. Standards such as the Web Content Accessibility Guidelines help, but accessibility in assessments goes further because timing, focus order, reading load, and alternate representations directly affect score interpretation.

Scoring design deserves equal attention. Machine scoring must be transparent and validated, especially for numeric entry, short text, and simulated actions. Human scoring for essays, spoken responses, or case analyses requires rubrics, scorer training, calibration, and ongoing monitoring for drift. Many programs use a hybrid model in which automated scoring handles routing or first-pass evaluation, while human scorers review edge cases or samples for quality control. The choice depends on stakes, construct complexity, and tolerance for false positives and false negatives.
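For numeric entry, a transparent scoring rule might look like the sketch below. It assumes the item specification defines a key and an absolute tolerance, and it treats unparseable input as incorrect. Those policy choices, along with the function name and sample values, are assumptions for illustration. A real program would document and validate its own rules for formats such as thousands separators, units, or fractions.

```python
from decimal import Decimal, InvalidOperation


def score_numeric_entry(response, key, tolerance):
    """Return 1 if the response is within tolerance of the key, else 0.

    All three arguments are strings so that decimal values are compared
    exactly rather than through binary floating point.
    """
    try:
        value = Decimal(response.strip())
    except InvalidOperation:
        return 0  # Unparseable input is scored as incorrect (assumed policy).
    if not value.is_finite():
        return 0  # Reject "NaN" and "Infinity", which Decimal would accept.
    return 1 if abs(value - Decimal(key)) <= Decimal(tolerance) else 0


score_numeric_entry("3.14", "3.14159", "0.01")   # 1
score_numeric_entry(" 3.2 ", "3.14159", "0.01")  # 0
score_numeric_entry("abc", "3.14159", "0.01")    # 0
```

Validating a rule like this means checking it against a sample of real candidate responses, including the malformed ones, before the item goes live.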

Finally, a strong CBT program closes the loop with analytics. After administration, teams should review item statistics, differential performance across groups, incident logs, completion times, and candidate feedback. If a new item type shows unusual omission rates, that may indicate ambiguity or poor rendering. If scores in a content domain drop compared with prior forms, the issue may be blueprint drift or instruction clarity rather than candidate ability. Continuous review is what turns computer-based testing from a delivery channel into a disciplined assessment system.
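A first-pass review of item statistics can be sketched as follows. The example computes a classical difficulty index (proportion correct) and an omission rate for one item. It assumes each candidate record maps item ids to 1, 0, or `None` for an omitted response, and that omissions count as incorrect when computing difficulty. The data layout and names are hypothetical, and a full review would add discrimination indices and differential item functioning analysis.

```python
def item_summary(responses, item_id):
    """Compute proportion correct and omission rate for one item."""
    if not responses:
        raise ValueError("No candidate records supplied")
    # A missing key is treated the same as an explicit None (omitted).
    scores = [record.get(item_id) for record in responses]
    n = len(scores)
    omitted = sum(1 for s in scores if s is None)
    correct = sum(1 for s in scores if s == 1)
    return {"p_value": correct / n, "omit_rate": omitted / n}


# Hypothetical scored responses for four candidates.
responses = [
    {"Q1": 1, "Q2": 1},
    {"Q1": 1, "Q2": None},
    {"Q1": 0, "Q2": None},
    {"Q1": None, "Q2": 0},
]

q1 = item_summary(responses, "Q1")  # {"p_value": 0.5, "omit_rate": 0.25}
q2 = item_summary(responses, "Q2")  # {"p_value": 0.25, "omit_rate": 0.5}
```

In this toy data, Q2 is omitted by half the candidates, which is the kind of pattern that would prompt a check for ambiguity or rendering problems before drawing conclusions about ability.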

How this hub connects to the broader assessment formats landscape

As a hub for assessment formats, computer-based testing should be understood as the framework that links several specialized topics. Fixed-form testing addresses comparability and operational simplicity. Adaptive testing addresses efficiency and measurement precision. Technology-enhanced items address richer evidence capture. Simulation-based assessment addresses authenticity. Remote proctoring addresses distributed delivery. Item banking and test assembly address scalability. Accessibility addresses fairness. Each deserves its own detailed treatment, but all rely on the same foundational decisions about construct definition, blueprinting, security, scoring, and user experience.

The practical takeaway is that format selection should never start with the platform demo. It should start with the decision the assessment must support, the evidence required for that decision, the candidate population, and the operational realities of delivery. Computer-based testing is powerful because it can accommodate many assessment formats in one ecosystem, from simple fixed forms to complex simulations. Use that flexibility carefully. Map the format to the construct, test it under real conditions, and measure its consequences after launch. If you are designing or updating an assessment program, use this hub as your entry point, then explore the linked topics in assessment design and development to choose the format that is valid, secure, accessible, and sustainable for your context.

Frequently Asked Questions

What is computer-based testing, and how does it work?

Computer-based testing, often called CBT, is the process of delivering, administering, scoring, and reporting assessments through digital devices instead of traditional paper booklets. Rather than filling in bubbles with a pencil, test takers complete an exam on a desktop computer, laptop, tablet, or secure testing kiosk. The testing platform presents questions on screen, records responses in real time, manages timing rules, controls navigation, and often supports automated scoring for selected-response items such as multiple-choice, matching, or drag-and-drop questions.

In practical terms, CBT is more than simply putting a paper test onto a screen. A well-designed computer-based assessment includes a delivery system that determines how questions appear, a user interface that guides the candidate through the exam, security controls that protect test content, and reporting tools that compile results quickly and accurately. Depending on the exam, the platform may also randomize item order, enforce section time limits, prevent backward navigation, or integrate multimedia such as audio, video, simulations, or interactive task types.

Because the entire testing process is managed digitally, computer-based testing plays a central role in modern assessment design and development. It affects how items are written, how test forms are assembled, how accommodations are delivered, how data is collected, and how results are interpreted. In other words, CBT is not just a delivery method; it is a framework that shapes the overall assessment experience from start to finish.

How is computer-based testing different from paper-based testing?

The main difference is the delivery environment. In paper-based testing, candidates receive printed materials, respond by writing or marking answers manually, and rely on proctors and later scoring teams to process the exam. In computer-based testing, the platform itself manages many of these functions automatically. It displays the questions, captures each response immediately, tracks elapsed time, and can score at least part of the assessment much faster than a paper workflow allows.

That difference has major implications for both test takers and assessment organizations. For candidates, CBT often creates a more guided and structured experience. On-screen tools may include countdown clocks, flagged-question features, zoom controls, calculators, highlighters, or accessibility supports such as screen readers and adjustable text size. For testing programs, CBT can reduce printing and shipping demands, improve result turnaround times, and generate richer performance data, including response patterns and timing information.

At the same time, the two formats are not always identical in how they feel. Reading long passages on screen can be different from reading them on paper, and some candidates are more comfortable with one medium than the other. Test developers must account for these differences when designing the exam to ensure fairness, usability, and score comparability. A strong computer-based testing program is carefully planned so that the digital format supports the intended skills being measured rather than distracting from them.

What are the main benefits of computer-based testing?

Computer-based testing offers several important advantages, which is why it has become the standard for many educational, certification, licensure, and employment assessments. One of the biggest benefits is efficiency. Because responses are captured digitally, organizations can streamline administration, scoring, and reporting. Results for many tests can be delivered much faster than with paper-based methods, especially when the exam includes automatically scored item types.

Another major benefit is flexibility in assessment design. CBT makes it possible to use question types that are difficult or impossible to deliver on paper, including interactive simulations, technology-enhanced items, audio-based listening tasks, video prompts, and scenario-based exercises. This allows exams to measure knowledge and skills in ways that can feel more authentic and aligned with real-world tasks. In some testing programs, computer delivery also supports adaptive testing, where the exam adjusts to the test taker’s performance level in order to estimate ability more efficiently.

CBT also strengthens operational control. Testing systems can standardize timing, enforce security settings, randomize content, and create detailed audit trails. Accessibility can often be improved as well through built-in accommodations such as color contrast settings, enlarged text, screen magnification, or text-to-speech features when appropriate. Finally, digital testing gives assessment teams access to detailed data that can be used for psychometric analysis, quality assurance, and continuous improvement. When implemented well, computer-based testing can make exams faster, more secure, more scalable, and more informative.

Are computer-based tests more secure and reliable?

Computer-based tests can be more secure and reliable than paper exams, but that depends on how well the testing system is designed and managed. On the security side, digital delivery enables features such as secure logins, browser lockdown tools, encrypted data transmission, remote monitoring options, item randomization, access controls, and detailed logging of candidate activity. These features can help reduce certain risks associated with paper testing, such as lost materials, unauthorized copying, or errors in manual handling.

Reliability also benefits from automation. A computer-based platform can apply timing rules consistently, present standardized instructions, capture responses precisely, and reduce clerical mistakes that sometimes occur in paper-based administration. For many item types, machine scoring improves consistency and speed. The system can also support immediate validation checks, such as alerting users if a response is incomplete or ensuring that required sections are submitted properly before the test ends.

That said, CBT introduces its own responsibilities. Technical failures, connectivity problems, device incompatibility, and poor interface design can affect the testing experience if they are not addressed in advance. Reliability depends on strong infrastructure, careful usability testing, backup procedures, and clear support protocols. Security depends on item protection, proctoring standards, candidate authentication, and responsible data governance. So while computer-based testing offers powerful tools for secure and dependable assessment, its success ultimately comes from thoughtful implementation rather than technology alone.

What should test takers expect when preparing for a computer-based test?

Test takers should expect an exam experience that involves both content knowledge and familiarity with the testing interface. In most cases, candidates will log into a secure platform, review instructions on screen, and move through the exam using navigation buttons rather than turning physical pages. They may encounter features such as flagged questions, progress indicators, on-screen calculators, digital notepads, or review screens before final submission. Understanding how these tools work can reduce anxiety and help candidates focus on the actual subject matter being tested.

Preparation should include more than studying the content. It is also wise to practice with sample questions or tutorial exams that mirror the real digital environment. This helps candidates become comfortable reading on screen, selecting answers accurately, managing time with on-screen clocks, and using any built-in tools. If the test includes special item types such as drag-and-drop, hot spot, audio response, or simulations, practice is especially valuable because these formats may require different response strategies than traditional multiple-choice questions.

Test takers should also pay attention to logistics. They should confirm device requirements if testing at home, understand the check-in process if testing at a center, and review any policies related to identification, breaks, prohibited materials, and accommodations. If accessibility supports are needed, those arrangements should be requested well in advance. The most successful approach is to treat computer-based testing as both an academic task and a digital experience. When candidates know the content, understand the interface, and arrive prepared for the procedures, they are far more likely to perform with confidence.