Portfolio assessment design strategies help educators and learning teams evaluate complex performance through curated evidence rather than one-time tests. In practice, a portfolio is a structured collection of student work, reflections, feedback, and revisions gathered over time to show growth, proficiency, and readiness for authentic tasks. I have used portfolio systems in higher education, workforce training, and program accreditation projects, and the pattern is consistent: when the design is rigorous, portfolios reveal dimensions of learning that selected-response exams cannot capture. They show process, judgment, persistence, and transfer. They also introduce design challenges around consistency, workload, scoring, and technology.

Within assessment formats, portfolio assessment occupies an important position because it bridges formative and summative use. It can document progress during a course, support high-stakes decisions at program milestones, and provide evidence for external reviewers. Common formats include showcase portfolios, developmental portfolios, competency-based portfolios, capstone portfolios, and professional practice portfolios. Each format answers a different question. Is the learner presenting best work, demonstrating growth, proving mastery against standards, or preparing for employment or licensure? Good design starts by naming that purpose clearly. Without a clear purpose, portfolios become digital scrapbooks: busy, impressive-looking, and weak as assessment evidence.

This hub article explains how to design portfolio assessments that are valid, reliable, manageable, and useful. It covers core decisions on purpose, evidence selection, rubric construction, scoring workflow, feedback cycles, platform choices, and quality assurance. It also situates portfolio assessment among other assessment formats, because a portfolio should rarely stand alone. The strongest systems combine portfolios with performance tasks, oral defenses, observations, quizzes, and self-assessment. Used well, portfolio assessment design strategies improve instructional alignment, make student learning visible, and support more defensible decisions about achievement.

What portfolio assessment is and when to use it

Portfolio assessment is best defined as a method for evaluating learning through a deliberately assembled body of evidence aligned to explicit outcomes. The key word is deliberately. Students should not simply upload everything they produce. Instead, they select or complete artifacts that map to criteria, include contextual notes, and often add reflection explaining decisions, challenges, and revisions. This makes portfolios especially strong for outcomes such as writing, design, clinical reasoning, teaching practice, problem solving, and professional communication. These are areas where quality depends on judgment and where process matters as much as the final product.

Use a portfolio when you need evidence across time, settings, or modalities. In teacher preparation, for example, a portfolio may include lesson plans, classroom videos, student work samples, assessment analyses, and reflective commentaries to demonstrate professional standards. In engineering, it can document design iterations, calculations, prototype photos, testing data, and post-project analysis. In corporate learning, a leadership portfolio might include project briefs, stakeholder presentations, feedback summaries, and implementation results. In each case, the portfolio captures integrated performance better than a single timed exam. It is less appropriate when the target is narrow factual recall, rapid screening of large cohorts, or strict standardization with minimal scorer judgment.

Choosing the right portfolio format for the assessment goal

The most important design move is selecting the portfolio format that matches the decision you need to make. A showcase portfolio presents strongest work and is useful for exhibitions, interviews, and motivation, but it can overstate proficiency if weak performances are hidden. A developmental portfolio tracks progress through drafts and milestones, making it excellent for coaching and growth evaluation. A competency-based portfolio organizes evidence by standards or outcomes and is the strongest option for certification, accreditation, and mastery progression. Capstone portfolios synthesize learning at the end of a program, often pairing artifacts with a reflective rationale. Professional portfolios emphasize employability and external audiences, so the design should separate assessment requirements from public presentation.

I usually advise teams to begin with the high-stakes question. Are you judging growth, current level, or readiness for independent practice? That answer determines artifact rules, reflection requirements, and scoring procedures. For instance, in a nursing program assessing clinical judgment, a developmental portfolio with supervisor feedback and revision logs may be more defensible than a showcase portfolio. In an art and design course, a showcase portfolio can work well if paired with criterion-referenced rubrics and an oral critique. In a competency-based bootcamp, requiring one validated artifact per standard prevents bloat and keeps the evidence auditable.

| Portfolio format | Primary purpose | Best use case | Main risk |
|---|---|---|---|
| Showcase | Present best work | Exhibitions, interviews, motivation | Inflated picture of overall performance |
| Developmental | Document progress over time | Coaching, revision-heavy courses | Large scoring workload |
| Competency-based | Verify mastery against standards | Certification, accreditation, mastery programs | Fragmented evidence if standards are poorly defined |
| Capstone | Synthesize program learning | End-of-program decisions | Too much retrospective reflection, not enough direct evidence |
| Professional | Support employability and practice | Career programs, licensure preparation | Confusing assessment needs with marketing needs |

Defining outcomes, evidence, and submission rules

Strong portfolio assessment design starts with measurable outcomes and evidence logic. For each outcome, specify what acceptable evidence looks like, under what conditions it must be produced, and how authenticity will be verified. If the outcome is “communicates technical findings to nonexpert audiences,” acceptable evidence might include a slide deck, an executive summary, and a recorded briefing, all tied to one project. If the outcome is “uses data ethically,” the portfolio might require a data-cleaning log, citation of sources, privacy decisions, and a short ethics commentary. This evidence map is what keeps portfolios from drifting into vague collections.
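An evidence map of this kind can be written down as a simple lookup and checked automatically. The sketch below is only an illustration: the two outcomes and their evidence types come from the examples above, while the `EVIDENCE_MAP` structure and the `missing_evidence` helper are hypothetical names, not part of any particular portfolio platform.

```python
# Hypothetical evidence map: each outcome lists the evidence types it requires.
EVIDENCE_MAP = {
    "communicates technical findings to nonexpert audiences": {
        "slide deck",
        "executive summary",
        "recorded briefing",
    },
    "uses data ethically": {
        "data-cleaning log",
        "source citations",
        "privacy decisions",
        "ethics commentary",
    },
}


def missing_evidence(submitted, evidence_map=EVIDENCE_MAP):
    """Return {outcome: sorted missing evidence types}, listing only outcomes with gaps.

    `submitted` maps an outcome to the evidence types the student provided.
    """
    gaps = {}
    for outcome, required in evidence_map.items():
        provided = set(submitted.get(outcome, []))
        missing = required - provided
        if missing:
            gaps[outcome] = sorted(missing)
    return gaps


if __name__ == "__main__":
    submission = {
        "communicates technical findings to nonexpert audiences": [
            "slide deck", "executive summary", "recorded briefing",
        ],
        "uses data ethically": ["data-cleaning log", "ethics commentary"],
    }
    print(missing_evidence(submission))
```

A check like this only confirms that the expected evidence types are present. Whether each artifact actually demonstrates the outcome remains a scorer judgment.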

Submission rules matter more than many teams expect. Set limits on number of artifacts, file types, maximum duration for media, naming conventions, and annotation requirements. Require metadata such as date, context, role, tools used, and degree of collaboration. Clarify whether artifacts can come from prior courses or workplace projects. If collaboration is allowed, require the student to identify their specific contribution. I have seen reliability improve immediately when teams move from “submit a portfolio” to “submit three artifacts, each linked to one outcome, with a 250-word evidence statement and one revision note.” Precision reduces scorer guesswork and improves fairness.
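Rules this precise can be checked before a portfolio ever reaches a scorer. The following is a minimal sketch, assuming the "three artifacts, 250-word evidence statement, one revision note" rule above and treating 250 words as an upper limit. The `Artifact` class, the metadata keys, and `validate_portfolio` are illustrative names rather than features of any real system.

```python
from dataclasses import dataclass, field

# Assumed metadata keys, mirroring the list above (date, context, role, tools, collaboration).
REQUIRED_METADATA = ("date", "context", "role", "tools_used", "collaboration")


@dataclass
class Artifact:
    title: str
    outcome: str               # the single outcome this artifact is linked to
    evidence_statement: str
    revision_note: str
    metadata: dict = field(default_factory=dict)


def validate_portfolio(artifacts, required_count=3, max_words=250):
    """Return a list of human-readable rule violations (empty if none)."""
    problems = []
    if len(artifacts) != required_count:
        problems.append(f"expected {required_count} artifacts, got {len(artifacts)}")
    for a in artifacts:
        if not a.outcome.strip():
            problems.append(f"{a.title}: not linked to an outcome")
        words = len(a.evidence_statement.split())
        if words == 0 or words > max_words:
            problems.append(
                f"{a.title}: evidence statement has {words} words (limit {max_words})"
            )
        if not a.revision_note.strip():
            problems.append(f"{a.title}: missing revision note")
        for key in REQUIRED_METADATA:
            if not a.metadata.get(key):
                problems.append(f"{a.title}: missing metadata '{key}'")
        # Collaborative work must state the student's own contribution.
        collaboration = a.metadata.get("collaboration")
        if collaboration not in (None, "", "individual") and not a.metadata.get(
            "own_contribution"
        ):
            problems.append(f"{a.title}: collaborative work needs an own_contribution note")
    return problems
```

Returning every violation at once, rather than stopping at the first, lets students fix a submission in a single pass.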

Building rubrics that produce defensible scores

Rubrics are the engine of portfolio assessment. The best portfolio rubrics are analytic, criterion-referenced, and tightly aligned to observable qualities in the evidence. Holistic rubrics can be efficient, but they often hide disagreement because scorers use different mental models of quality. Analytic dimensions such as accuracy, complexity, audience awareness, technical execution, reflection quality, and use of feedback make judgments clearer. Performance level descriptors should distinguish adjacent levels using concrete language. “Uses evidence selectively and explains relevance” is stronger than “good use of evidence.”

Rubric design should also separate product from process when both matter. In writing portfolios, I often score final composition quality separately from revision effectiveness and reflective insight. A polished final piece can mask weak process, while a student who improved substantially may still not meet final performance expectations. Distinguishing these dimensions supports better feedback and more accurate decisions. Anchor samples are essential. Collect examples at each performance level, annotate why they fit, and use them in scorer calibration. If your team cannot explain the difference between proficient and advanced using actual samples, the rubric is not yet ready for operational use.

Balancing validity, reliability, and feasibility

Portfolio assessment often raises a practical question: can a rich format still be rigorous? Yes, but only if design teams manage tradeoffs directly. Validity improves when artifacts reflect authentic tasks, multiple points in time, and direct alignment to outcomes. Reliability improves when evidence requirements are standardized, rubrics are explicit, scorers are trained, and moderation procedures are routine. Feasibility improves when portfolios are scoped tightly, workflows are automated where possible, and the number of scored dimensions is realistic. Problems emerge when institutions try to maximize all three at once instead of accepting tradeoffs. For example, asking for ten artifacts across eight outcomes may seem thorough, but it overwhelms students and scorers and usually reduces quality.

A practical approach is to sample evidence strategically. Require one mandatory artifact for each high-priority outcome, then allow one integrative artifact to cover multiple outcomes where justified. Use staged deadlines so review does not bottleneck at the end of term. For high-stakes use, double-score a subset, adjudicate disagreements, and monitor inter-rater agreement. Generalizability theory and Many-Facet Rasch Measurement can support advanced programs, especially when multiple raters and tasks are involved, but many teams gain substantial improvement simply from calibration sessions and clear decision rules. Rigor comes from disciplined design, not from portfolio size.
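For the double-scored subset, agreement can be monitored with a short script. The sketch below uses Cohen's kappa, one common chance-corrected agreement statistic for two raters. The choice of kappa and the one-point adjudication threshold are assumptions made for illustration, not requirements of the approach described above.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters scoring the same portfolios on a categorical scale."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equal-length, non-empty score lists")
    n = len(rater_a)
    # Observed proportion of exact agreement.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's marginal score distribution.
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters gave the same single score to every portfolio
    return (observed - expected) / (1 - expected)


def needs_adjudication(rater_a, rater_b, max_gap=1):
    """Indices of portfolios whose two numeric scores differ by more than max_gap levels."""
    return [
        i for i, (a, b) in enumerate(zip(rater_a, rater_b)) if abs(a - b) > max_gap
    ]


if __name__ == "__main__":
    first_reader = [3, 2, 4, 1, 3, 2]
    second_reader = [3, 2, 2, 1, 3, 3]
    print(cohens_kappa(first_reader, second_reader))
    print(needs_adjudication(first_reader, second_reader))
```

Unweighted kappa treats every disagreement as equally serious. For ordinal rubric levels, a weighted variant may be more informative, and the adjudication threshold should follow the program's own decision rules.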

Feedback, reflection, and revision cycles

One reason portfolios remain powerful among assessment formats is that they naturally support learning during the assessment process. Reflection is not decorative; it provides evidence of metacognition, decision-making, and transfer. However, reflection prompts must be specific. Instead of asking students to “reflect on your learning,” ask what changed between draft one and draft two, what evidence they rejected and why, how audience needs shaped revisions, or what they would do differently in a new context. Good prompts generate analyzable responses rather than generic self-praise.

Revision cycles should be built into the assessment schedule. In a developmental portfolio, I typically require an early checkpoint, midcourse review, and final submission, with targeted feedback at each stage. Feedback should point to rubric criteria, not just general impressions. Peer review can be valuable when students use a simplified rubric and evaluate anonymized samples first. That training improves the quality of both peer comments and final submissions. There is a tradeoff: too many revisions can obscure what the student can do independently. For summative decisions, define whether feedback is coaching, correction, or resubmission authorization, and keep an audit trail.

Platforms, accessibility, and academic integrity

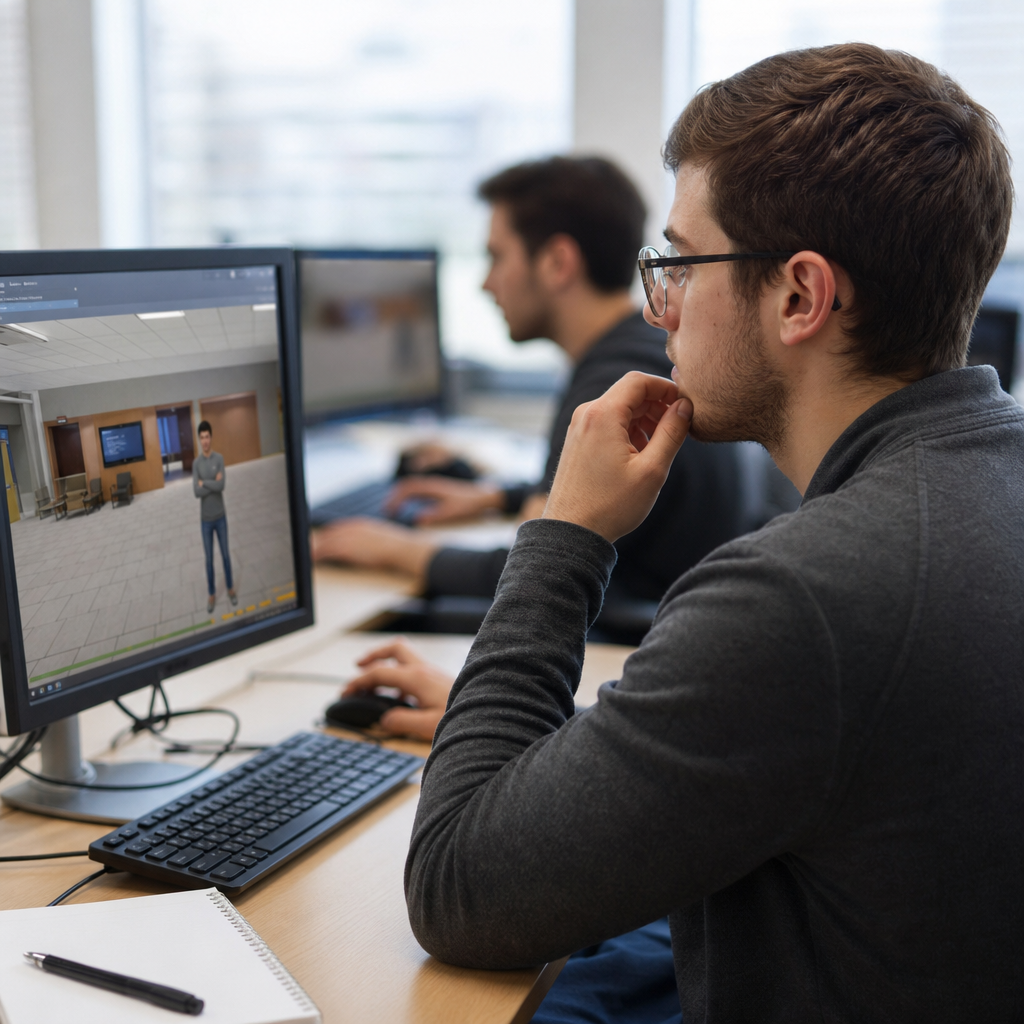

Technology choices shape the student experience and the evidence quality. Common platforms include learning management system tools such as Canvas ePortfolios or Blackboard, dedicated systems like PebblePad and Taskstream, and flexible web builders such as Google Sites, Notion, or Adobe Portfolio. The right platform depends on required media types, rubric integration, privacy controls, export options, and reviewer workflow. For accreditation or licensure, portability and archival access matter. For classroom use, simplicity and low training burden matter more. I have seen elegant public websites fail because evaluators could not score them efficiently or because students lost access after graduation.

Accessibility is nonnegotiable. Portfolio systems should support captions, alt text, keyboard navigation, readable contrast, accessible document formats, and mobile usability. Submission instructions should specify these requirements. Academic integrity requires provenance checks, version history, citation expectations, and, where appropriate, supervisor attestations or live defenses. Artificial intelligence tools complicate authorship, so policies must distinguish acceptable assistance, such as grammar support or brainstorming, from prohibited ghost production. Requiring process artifacts, drafts, and oral explanation is often more effective than relying on detection tools. Authenticity is strongest when the portfolio contains traces of real work, not just polished outputs.

Using portfolio assessment as the hub within assessment formats

As a sub-pillar within assessment design and development, portfolio assessment works best when connected to related assessment formats rather than treated as an isolated method. Performance tasks generate strong portfolio artifacts. Presentations and viva voces help verify authorship and deepen evidence. Simulations can produce decision logs, recordings, and debrief reflections for inclusion. Observation checklists from fieldwork or practicum add external perspectives. Quizzes and short tests still have value for prerequisite knowledge and can complement portfolio evidence. Self-assessment and peer assessment contribute insight, but they should not replace trained evaluator judgment in high-stakes decisions.

This hub perspective matters because many design failures come from using portfolios to do every job. A portfolio should not become the sole measure of knowledge, skill, reflection, professionalism, and attendance all at once. Instead, define what the portfolio is uniquely suited to capture, then link other formats around it. A capstone course, for example, might include milestone proposals, a prototype demonstration, mentor observations, and a final portfolio defense. That structure preserves the richness of portfolio evidence while increasing confidence in scoring decisions. If you are building or revising an assessment system, start by mapping outcomes to formats, then assign the portfolio a focused role with clear rules and rubrics.

Portfolio assessment design strategies succeed when purpose, evidence, scoring, and workflow are aligned from the start. The central lesson is simple: a portfolio is not a folder of student work; it is an assessment format that requires explicit outcomes, curated evidence, analytic rubrics, and disciplined review procedures. When those elements are in place, portfolios can measure complex learning with a level of authenticity that traditional tests rarely achieve. They can also support growth, because students see how quality develops through feedback, revision, and reflection.

For teams working within assessment formats, the practical benefit is flexibility without sacrificing rigor. You can use showcase, developmental, competency-based, capstone, or professional portfolios, but each must match the decision at hand. Keep artifact requirements tight, train scorers with anchor samples, protect accessibility and integrity, and combine portfolios with complementary methods such as performance tasks and oral defenses. If you are developing an assessment system under the broader Assessment Design & Development topic, use this hub as your starting point: clarify the purpose of the portfolio, map evidence to outcomes, and build the scoring process before choosing the platform.

The next step is straightforward. Review one current course or program assessment, identify which outcomes require evidence across time or authentic performance, and redesign that portion as a focused portfolio with clear submission rules and a calibrated rubric. That single change can make learning more visible, feedback more useful, and decisions more defensible.

Frequently Asked Questions

What is portfolio assessment, and why is it more effective than a one-time test for complex learning?

Portfolio assessment is a structured approach to evaluation in which learners collect and curate evidence of their work over time to demonstrate growth, proficiency, and readiness for authentic performance. Rather than relying on a single exam or isolated assignment, a portfolio brings together multiple artifacts such as projects, drafts, reflections, feedback, revisions, presentations, and performance samples. This matters because many important outcomes—critical thinking, applied problem-solving, communication, professional judgment, design ability, and reflective practice—do not show up well in one sitting or in one format. They emerge across repeated attempts, different contexts, and increasingly sophisticated work.

In well-designed systems, the portfolio is not just a folder of completed assignments. It is intentionally aligned to learning outcomes, supported by clear criteria, and used to evaluate patterns of performance. That makes it especially useful in higher education, workforce training, certification pathways, and accreditation settings, where stakeholders need evidence that learners can actually do the work expected in real environments. A portfolio can show the process behind the product, the learner’s capacity to improve, and the transfer of skills across tasks. This richer evidence base often leads to more valid decisions than a one-time test, particularly when the goal is to assess complex competence rather than short-term recall.

What are the most important design elements of an effective portfolio assessment system?

The strongest portfolio assessment systems are built around a small set of core design decisions. First, the portfolio must be anchored to clearly defined outcomes. Educators should identify exactly what learners are expected to know, do, and demonstrate, then map portfolio artifacts directly to those outcomes. Second, the evidence requirements need structure. Learners should know what types of artifacts are required, how many are needed, what quality standards apply, and how those pieces fit together to tell a coherent story of learning. Without that structure, portfolios quickly become inconsistent, difficult to score, and vulnerable to subjective interpretation.

Third, criteria must be transparent. High-quality portfolio design includes rubrics or scoring guides that describe performance levels in plain, observable language. This improves reliability for evaluators and gives learners a clearer target. Fourth, reflection should be built in as a required component, not treated as an optional add-on. Reflective writing helps learners explain why an artifact matters, what feedback they received, how they revised, and what the evidence shows about their development. Fifth, the process should include checkpoints, feedback cycles, and revision opportunities. Portfolios work best when they support learning during the process, not only judgment at the end.

Finally, logistics matter more than many teams expect. An effective design addresses platform selection, file organization, submission timelines, assessor calibration, accessibility, and long-term storage. In accreditation and workforce contexts especially, portfolio systems also need consistency across instructors or reviewers. In short, good portfolio assessment design blends pedagogical clarity with operational discipline. The portfolio should feel meaningful to learners, manageable for instructors, and credible to external audiences.

How do you choose the right artifacts for a portfolio without making it too large or unfocused?

The key is to select artifacts strategically rather than trying to collect everything. A common mistake is treating the portfolio as a complete archive of all student work. That usually creates volume without insight. Instead, each artifact should serve a purpose tied to a specific learning outcome or competency. Start by identifying the claims the portfolio needs to support. If the goal is to demonstrate analytical reasoning, communication, and professional application, then each required artifact should provide evidence for one or more of those areas. This shifts the design from accumulation to argument: the portfolio becomes a curated case for competence.

A balanced portfolio typically includes a mix of polished products, works in progress, and evidence of revision. For example, a learner might include an early draft, instructor or peer feedback, a revised final version, and a reflection explaining what changed and why. That combination is often more informative than a single finished piece because it reveals growth and responsiveness to feedback. In professional or technical programs, performance tasks, fieldwork evidence, simulations, presentations, and annotated work samples can be particularly powerful. In academic settings, research papers, design projects, case analyses, lab reports, and capstone artifacts are common choices.

To avoid sprawl, set explicit limits. Require a defined number of artifacts per outcome, establish formatting and annotation expectations, and ask learners to justify inclusion. Some programs use a “best evidence plus growth evidence” model, where students submit one artifact showing highest performance and one showing development over time. Others require a capstone reflection that ties all artifacts together. The most effective portfolios are selective, purposeful, and interpretable. If an assessor cannot quickly see why an artifact is included and what it demonstrates, the portfolio probably needs tighter design.

How can educators make portfolio assessment fair, reliable, and practical at scale?

Fairness and reliability depend on design discipline. The first step is to define what quality looks like through strong rubrics. Criteria should focus on observable features of performance and distinguish clearly between performance levels. Vague terms like “good” or “adequate” should be replaced with language that identifies what the learner actually does, such as integrates evidence effectively, applies theory appropriately, or justifies decisions with relevant reasoning. When evaluators share that level of clarity, scoring becomes more consistent.

Calibration is equally important. If multiple instructors, faculty members, or reviewers assess portfolios, they need opportunities to score sample work together, compare interpretations, and resolve differences. This process helps normalize expectations and reduces drift over time. Anchor samples at each performance level can further improve consistency. In larger programs, moderation procedures—such as second reads, spot checks, or committee review of borderline cases—can strengthen confidence in decisions. These practices are especially important when portfolio results affect advancement, certification, or accreditation reporting.

Practicality comes from smart constraints and workflow planning. Programs should standardize templates, deadlines, naming conventions, and evidence categories so that assessors are not decoding unique formats for every learner. Digital portfolio platforms can simplify collection and review, but technology alone does not solve design problems. The review process must be realistic in terms of faculty time and assessor load. Many successful systems use milestone reviews during a course or program so learners build the portfolio gradually rather than submitting everything at the end. That approach improves quality and spreads the workload.

Equity should also remain central. Educators need to consider access to technology, clarity of instructions, support for reflective writing, and whether artifact choices disadvantage some learners. Fair portfolio assessment does not mean identical submissions; it means consistent expectations, transparent criteria, and multiple ways for learners to demonstrate competence. When these pieces are in place, portfolio assessment can be both rigorous and manageable, even across large or diverse programs.

What are the most common mistakes in portfolio assessment design, and how can they be avoided?

The most common mistake is lack of purpose. When teams launch a portfolio requirement without deciding exactly what it is meant to assess, the result is usually a confusing collection of materials with limited evaluative value. Portfolios need a clear function: are they measuring growth, proficiency, readiness for practice, or program outcomes? Once the purpose is defined, the evidence, rubric, and review process can be aligned. Without that alignment, learners feel uncertain, assessors score inconsistently, and the portfolio becomes a compliance exercise rather than a meaningful assessment.

Another frequent problem is overcollection. Asking students to upload too many artifacts creates administrative burden and weakens the signal. Reviewers end up sorting through large amounts of low-value material, and learners may focus more on uploading than on selecting and interpreting evidence. This can be prevented by requiring a limited number of high-value artifacts, each accompanied by annotation or reflection. A related mistake is ignoring revision and reflection altogether. If the portfolio contains only final products, it misses one of its greatest strengths: the ability to show learning over time. Reflection and documented revision reveal how learners respond to feedback, refine their thinking, and improve performance.

Programs also run into trouble when scoring criteria are vague or when faculty have not been calibrated. Even strong artifacts cannot produce trustworthy results if assessors are applying different standards. Regular rubric review, sample scoring sessions, and moderation processes solve much of this problem. Finally, some portfolio initiatives fail because the implementation is too cumbersome. If students do not understand the process, if faculty cannot manage the review load, or if the platform is difficult to use, quality drops quickly. The solution is to design for usability from the beginning: simple structure, explicit guidance, staged checkpoints, and support resources for both learners and evaluators.

In practice, the best portfolio systems are not the most elaborate ones. They are the ones that are aligned, selective, transparent, and sustainable. When the design is intentional, portfolios can generate richer evidence than traditional testing while also improving learning, reflection, and program-level decision-making.