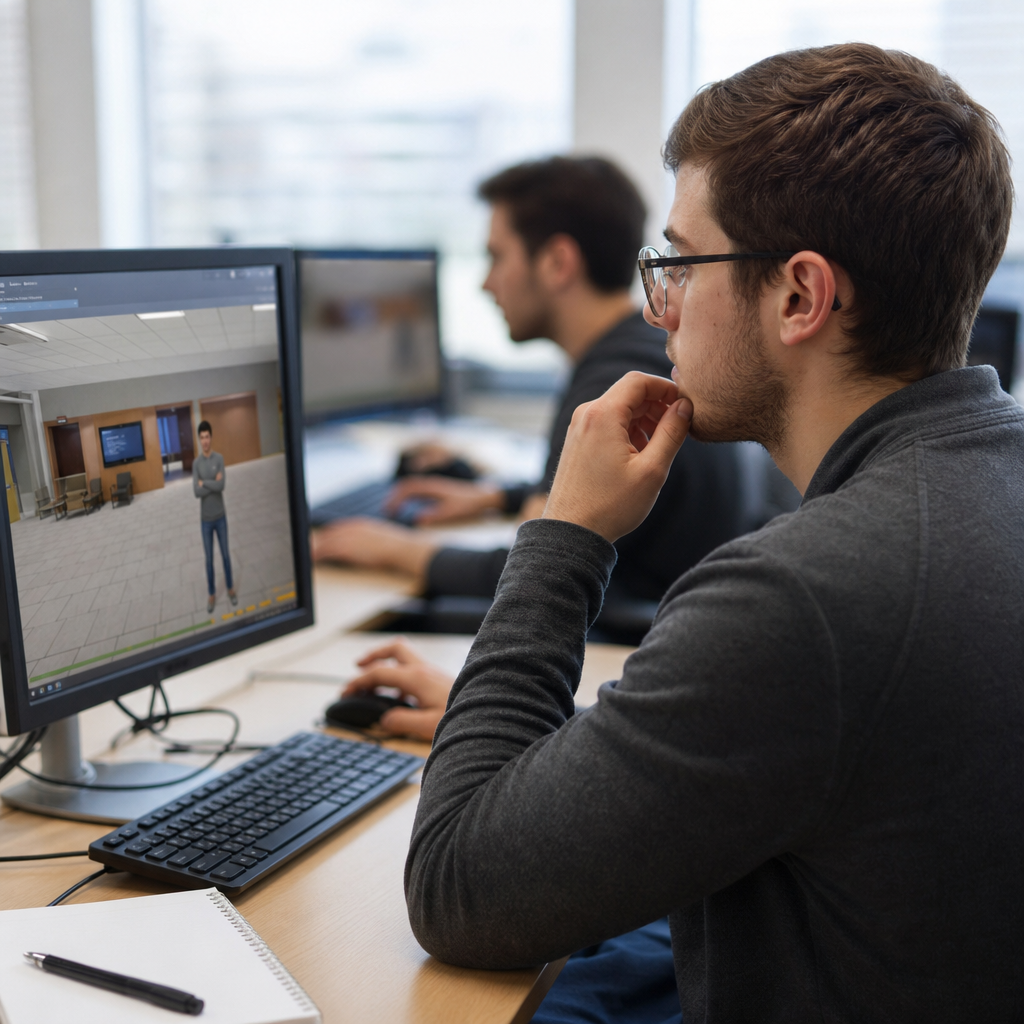

Game-based assessment blends measurement with interactive play, using digital or physical game mechanics to evaluate what learners know, how they think, and how they apply skills under changing conditions. In assessment design and development, the term covers far more than adding points or badges to a quiz. A true game-based assessment embeds evidence collection into tasks, rules, feedback loops, and decision paths so performance data emerges from authentic action. This matters because schools, universities, employers, and training teams increasingly need formats that measure complex competencies such as problem solving, collaboration, persistence, systems thinking, and transfer, not just recall. I have worked on assessment programs where conventional multiple-choice tests produced neat score reports yet failed to explain why candidates struggled in practice. Game-based formats can reveal that missing layer by capturing process data alongside outcomes.

Assessment formats shape what can be measured, how reliable results are, and whether learners experience the task as meaningful or artificial. Within the broader assessment formats landscape, game-based assessment sits alongside selected-response tests, constructed responses, performance tasks, simulations, portfolios, oral exams, and adaptive testing. Its distinct value is contextualized evidence. A learner managing a virtual supply chain, diagnosing a patient in a branching scenario, or negotiating resources in a multiplayer environment generates richer signals than someone selecting one option from four. Those signals may include time on task, strategy shifts, error patterns, hint use, collaboration choices, and final success. When aligned well, game-based assessment improves engagement and yields actionable evidence. When aligned poorly, it becomes an expensive distraction. Understanding both opportunities and challenges is therefore essential for anyone building serious assessment systems.

At its best, game-based assessment answers three questions directly: what competency is being measured, what in-game behaviors count as evidence, and how scores will be interpreted for decisions. Those questions are familiar across all assessment formats, but game environments make them more visible because the mechanics can either support or distort measurement. A well-designed game task can reduce test anxiety, increase persistence, and simulate real-world complexity. A weak design can reward gaming the system, privilege prior gaming experience, or blur the line between entertainment and valid inference. For a hub page on assessment formats, this article maps the field comprehensively: what game-based assessment is, where it works, how it compares with other formats, what technical and psychometric issues matter most, and how to decide whether it belongs in your assessment strategy.

What game-based assessment includes within assessment formats

Game-based assessment is not one format but a family of formats. The simplest version wraps assessment items in a game shell: learners answer questions to advance through levels. This can improve motivation, but it rarely changes what is measured. A more sophisticated format places learners inside an interactive scenario where choices, sequences, and tradeoffs produce evidence. Examples include business simulations, engineering design challenges, cybersecurity capture-the-flag environments, and language-learning quests that require comprehension, production, and strategic communication. There are also stealth assessment models, popularized by work from Valerie Shute and collaborators, where evidence is collected continuously without interrupting gameplay for overt test questions. In practice, most operational programs sit somewhere between quiz gamification and fully embedded simulation.

As a hub under assessment design and development, it helps to position game-based assessment relative to neighboring formats. Compared with multiple-choice testing, it captures process as well as product. Compared with open-ended performance tasks, it can automate scoring and standardize administration more easily. Compared with portfolios, it offers tighter control over the evidence environment. Compared with live simulations, it can be scaled across locations and time zones. Yet each comparison comes with caveats. Automated scoring is only as valid as the evidence model; standardization can be undermined by technical variation; and scale often requires simplifying context. The right question is not whether game-based assessment is better than traditional assessment formats, but which claims it supports more credibly and at what cost.

Organizations use game-based assessment for different purposes. In K-12 settings, it often supports formative assessment by diagnosing misconceptions during learning. In higher education, it appears in clinical, engineering, and teacher preparation simulations where practice and evaluation overlap. Employers use it in hiring and talent development to sample judgment, prioritization, and learning agility. Government and military programs use simulation-based exercises for mission-critical skills where direct testing in the real environment would be costly or unsafe. Across these contexts, the common design principle is evidence-centered design: define competencies, specify observable behaviors, and build tasks that elicit those behaviors under controlled conditions.
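
The evidence-centered design chain described above (competencies, observable behaviors, tasks) can be sketched as a small data model. This is a minimal illustration, not a standard schema: the class names, the event names such as `reorders_queue_by_severity`, and the weights are all hypothetical. The helper simply flags competencies that no current task can produce evidence for.

```python
from dataclasses import dataclass, field


@dataclass
class EvidenceRule:
    """Links one observable in-game behavior to a competency."""
    behavior: str        # hypothetical event name expected in the game log
    competency: str      # competency the behavior is treated as evidence for
    weight: float = 1.0  # illustrative weight, not an empirical value


@dataclass
class Task:
    """A game task and the behaviors it is designed to elicit."""
    name: str
    elicits: list[str] = field(default_factory=list)


def uncovered_competencies(competencies, rules, tasks):
    """Return competencies for which no task elicits a mapped behavior."""
    elicited = {behavior for task in tasks for behavior in task.elicits}
    covered = {rule.competency for rule in rules if rule.behavior in elicited}
    return [c for c in competencies if c not in covered]


competencies = ["prioritization", "systems_thinking"]
rules = [
    EvidenceRule("reorders_queue_by_severity", "prioritization"),
    EvidenceRule("traces_downstream_effect", "systems_thinking"),
]
tasks = [Task("triage_round", elicits=["reorders_queue_by_severity"])]

# systems_thinking has a rule, but no task elicits the behavior it relies on.
print(uncovered_competencies(competencies, rules, tasks))
```

Running a coverage check like this before building any scenes is one way to catch a competency that the game claims to measure but never actually samples.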

Major opportunities: richer evidence, stronger engagement, better fit for complex skills

The biggest opportunity is measurement of complex performance. Traditional selected-response items remain efficient for factual knowledge and some aspects of reasoning, but they struggle to represent dynamic decision making. In a game-based assessment, a learner can be asked to stabilize an ecosystem, triage patients after a disaster, allocate a budget across competing priorities, or debug a software system under time pressure. These tasks generate evidence about planning, monitoring, adaptation, and consequences. Because every action can be logged, designers can build models that distinguish productive persistence from random clicking, or strategic experimentation from confusion. This is especially useful for competencies such as systems thinking, collaboration, and procedural fluency that unfold over time.
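
As a rough illustration of how logged actions might separate deliberate play from random clicking, the sketch below derives a few simple features from a time-ordered event log. The event format, the action names, and the 0.5-second `rapid_gap` threshold are assumptions chosen for the example; an operational program would need to validate any such threshold against observed behavior.

```python
from statistics import median


def action_features(events, rapid_gap=0.5):
    """Summarize a time-ordered list of (seconds, action) log events.

    rapid_gap is an assumed threshold in seconds: gaps shorter than this
    are treated as too fast to reflect a deliberate choice.
    """
    if len(events) < 2:
        raise ValueError("need at least two events to compute gaps")
    times = [t for t, _ in events]
    actions = [a for _, a in events]
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    return {
        "n_actions": len(events),
        "median_gap": median(gaps),
        "rapid_share": sum(g < rapid_gap for g in gaps) / len(gaps),
        "distinct_share": len(set(actions)) / len(actions),
    }


# Hypothetical logs: one paced and varied, one fast and repetitive.
deliberate = [(0.0, "inspect_sensor"), (4.0, "adjust_valve"),
              (9.0, "inspect_sensor"), (15.0, "run_test")]
clicking = [(0.0, "click_tile"), (0.2, "click_tile"), (0.4, "click_tile"),
            (0.6, "click_tile"), (3.0, "click_tile")]

print(action_features(deliberate))
print(action_features(clicking))
```

Features like these are descriptive only. Whether a high share of rapid, repeated actions really signals disengagement rather than expertise is a question for cognitive labs and validation studies, not for the log alone.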

Engagement is another genuine advantage when designed responsibly. In projects I have reviewed, completion rates improved when assessments offered clear goals, immediate feedback inside the task, and a coherent narrative frame. Engagement matters because disengaged candidates produce noisy data. If a format reduces boredom and encourages sustained effort, score quality can improve. Research on serious games and simulations generally suggests that motivation tends to rise when tasks feel purposeful and feedback is informative. However, engagement should be treated as a means, not the goal. The assessment benefit is not that learners had fun; it is that they invested enough attention to reveal their actual competence.

Game-based assessment also supports situated judgment. Many professions require people to act under uncertainty with incomplete information. A nurse, project manager, aircraft technician, or school leader rarely faces isolated textbook questions. They interpret cues, weigh tradeoffs, and update decisions as conditions change. A branching scenario or simulation can present these realities in a controlled way. For example, a leadership simulation may require handling an underperforming employee, a budget shortfall, and a stakeholder complaint in one sequence. The resulting evidence is more instructionally useful than a single score because it shows where decision quality improved or deteriorated.

| Assessment format | Primary strength | Best use case | Main limitation |
|---|---|---|---|
| Multiple-choice test | Efficiency and reliability | Knowledge checks at scale | Limited process evidence |
| Constructed response | Explanation and reasoning | Written analysis and justification | Scoring time and consistency |
| Performance task | Authentic application | Complex skill demonstration | Administration and scoring cost |
| Simulation | Safe practice in realistic context | High-stakes procedural judgment | Technical build complexity |
| Game-based assessment | Embedded evidence plus engagement | Dynamic skills, strategy, adaptation | Validity risks if mechanics dominate |
| Portfolio | Longitudinal evidence | Growth over time | Variable comparability |

Design and psychometric challenges that determine whether results can be trusted

The central challenge is validity. A score from a game-based assessment is only meaningful if in-game behavior supports the intended interpretation. That requires a clear chain from competency model to evidence model to task model. If the goal is critical thinking, but success depends heavily on fast mouse control or prior familiarity with role-playing games, the score is contaminated. I have seen prototypes where candidates who understood the content still underperformed because navigation was opaque or the reward structure encouraged shortcuts unrelated to the target skill. The fix is not cosmetic. Designers must isolate irrelevant variance, run cognitive labs, and verify that high performers are succeeding for the right reasons.
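
One simple screening step for construct-irrelevant variance is to check how strongly game scores track something the assessment is not supposed to measure, such as self-reported weekly gaming hours. The sketch below computes a Pearson correlation in plain Python. The data are invented for illustration, and a correlation is only a prompt for closer review, not a verdict on validity.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    ss_x = sum((x - mean_x) ** 2 for x in xs)
    ss_y = sum((y - mean_y) ** 2 for y in ys)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined when a variable is constant")
    return cov / sqrt(ss_x * ss_y)


# Hypothetical pilot data: weekly gaming hours and game-based scores.
gaming_hours = [0, 1, 3, 6, 10, 15]
game_scores = [52, 55, 61, 70, 74, 83]

r = pearson_r(gaming_hours, game_scores)
print(f"correlation with prior gaming experience: {r:.2f}")
```

A strong positive value in real pilot data would suggest looking at whether controls, navigation, or genre conventions are driving scores, which is exactly the kind of question cognitive labs are meant to answer.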

Reliability presents a second challenge. Rich environments create more opportunities for inconsistency. Two versions of a scenario may differ in hidden difficulty. Multiplayer tasks can produce unequal experiences depending on teammates. Adaptive pathways improve efficiency but complicate comparability. Standard psychometric tools such as item response theory, generalizability theory, and many-facet Rasch measurement can help, yet they need enough data and careful assumptions. Process data adds power, but also complexity. You may have timestamps, event sequences, chat logs, and resource choices; turning those into stable indicators demands feature engineering, validation studies, and often machine learning models that remain interpretable enough for stakeholders.
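
As a first look at internal consistency, before moving to the IRT or generalizability models mentioned above, a team might compute Cronbach's alpha over the scored indicators extracted from gameplay. The sketch below is a plain-Python version with invented data. It assumes each candidate has a score on every indicator, and alpha itself assumes the indicators reflect a single construct, which indicators drawn from one shared scenario may not satisfy.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for rows of indicator scores (one row per candidate)."""
    if len(rows) < 2:
        raise ValueError("need at least two candidates")
    k = len(rows[0])
    if k < 2 or any(len(row) != k for row in rows):
        raise ValueError("every row needs the same number (>= 2) of indicators")
    item_vars = [variance(column) for column in zip(*rows)]
    total_var = variance([sum(row) for row in rows])
    if total_var == 0:
        raise ValueError("alpha is undefined when total scores do not vary")
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical indicator scores: planning, monitoring, adaptation (0-3 each).
pilot = [
    [3, 2, 3],
    [1, 1, 0],
    [2, 2, 2],
    [0, 1, 1],
    [3, 3, 2],
]
print(f"alpha = {cronbach_alpha(pilot):.2f}")
```

A low value here does not necessarily mean the game is unreliable; it may mean the indicators tap different competencies and should be reported separately.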

Fairness is equally important. Game-based assessment can disadvantage candidates with less gaming experience, lower digital fluency, slower hardware, limited accessibility support, or cultural distance from the scenario. An assessment for hiring or certification must control those risks. Accessibility standards such as WCAG should shape interaction design from the start, not be patched in later. Keyboard navigation, captioning, screen reader compatibility, color contrast, response-time accommodations, and alternative input methods are basic requirements. Fairness reviews should also test narrative assumptions. A scenario framed around niche cultural references may privilege one group without measuring the target competency any better.

Scoring transparency is another pressure point. Stakeholders often trust a conventional rubric more readily than an algorithm derived from gameplay traces. If the scoring model combines decision quality, efficiency, and help-seeking, designers must explain why those features matter and how they were weighted. Black-box models can be useful during research, but operational assessments benefit from interpretable evidence rules. Defensibility matters most in high-stakes settings such as admissions, licensure, and employment. If a candidate challenges a score, the program should be able to show what behaviors were observed, how those behaviors relate to the construct, and what quality-control checks were applied.
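
An interpretable evidence rule can be as simple as a weighted sum whose per-feature contributions are reported alongside the total. The sketch below assumes three features already scaled to the range 0 to 1; the feature names and weights are illustrative assumptions, not recommended values.

```python
# Illustrative weights only; real weights would come from the evidence model
# and be documented and reviewed with stakeholders.
WEIGHTS = {"decision_quality": 0.6, "efficiency": 0.25, "help_seeking": 0.15}


def score_with_explanation(features, weights=WEIGHTS):
    """Return (total, contributions) so each feature's share is visible."""
    missing = set(weights) - set(features)
    if missing:
        raise KeyError(f"missing features: {sorted(missing)}")
    for name in weights:
        if not 0.0 <= features[name] <= 1.0:
            raise ValueError(f"{name} must be scaled to the range 0..1")
    contributions = {name: weights[name] * features[name] for name in weights}
    return sum(contributions.values()), contributions


candidate = {"decision_quality": 0.8, "efficiency": 0.5, "help_seeking": 1.0}
total, parts = score_with_explanation(candidate)

print(f"total = {total:.3f}")
for name, value in parts.items():
    print(f"  {name}: {value:.3f}")
```

Because every contribution is traceable to a named behavior and a documented weight, a score challenge can be answered by showing the breakdown rather than pointing to an opaque model.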

Implementation, tools, and where game-based assessment works best

Successful implementation starts small. Instead of building a massive custom game, many teams begin with one high-value competency and one scenario that can outperform an existing format. Tools vary by complexity. Authoring platforms for branching scenarios, such as Articulate Storyline or Twine, support lower-cost pilots. Simulation environments built in Unity or Unreal enable richer interactions but require stronger product management, analytics, and quality assurance. Learning platforms can capture xAPI statements for event-level data, while dashboards in tools such as Power BI or Tableau help translate raw logs into usable reports. For psychometric analysis, R is a flexible and widely used environment, especially when combining classical test theory, IRT, and sequence analysis.
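
To make the event-level data concrete, the sketch below builds one xAPI-style statement as a Python dictionary using the actor, verb, object, result, and timestamp properties. The learner address and the activity identifier under `example.org` are placeholders invented for the example, and sending the statement to a learning record store is left out.

```python
import json
from datetime import datetime, timezone


def make_statement(learner_email, verb_id, verb_label, activity_id,
                   success, seconds):
    """Build a minimal xAPI-style statement for one in-game event."""
    return {
        "actor": {"objectType": "Agent", "mbox": f"mailto:{learner_email}"},
        "verb": {"id": verb_id, "display": {"en-US": verb_label}},
        "object": {"objectType": "Activity", "id": activity_id},
        "result": {
            "success": success,
            "duration": f"PT{seconds}S",  # ISO 8601 duration, whole seconds
        },
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }


statement = make_statement(
    learner_email="learner@example.org",                        # placeholder
    verb_id="http://adlnet.gov/expapi/verbs/completed",
    verb_label="completed",
    activity_id="https://example.org/activities/sepsis-sim/step-3",  # placeholder
    success=True,
    seconds=42,
)
print(json.dumps(statement, indent=2))
```

Agreeing on verb and activity identifiers early matters more than the code itself: inconsistent identifiers across scenario versions are a common reason event logs cannot be pooled for analysis later.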

Game-based assessment works best when three conditions are present. First, the target competency is dynamic and context dependent. Second, meaningful evidence can be observed through actions, not just final answers. Third, the decision at stake justifies the development cost. This is why the format is common in healthcare simulations, operational training, and leadership development, but less useful for straightforward vocabulary checks or basic compliance knowledge. A hospital may gain real value from a sepsis-management simulation because timing, prioritization, and protocol adherence matter. A simple annual policy attestation probably does not require a game environment.

This format is also powerful when paired with other assessment formats rather than used alone. A hiring process might combine a short knowledge test, a game-based judgment exercise, and a structured interview. A course assessment system might use auto-scored quizzes for prerequisite knowledge, game-based tasks for application, and reflective writing for metacognition. That mixed-format approach improves coverage while controlling cost and risk. As the hub for assessment formats, the practical takeaway is clear: no single format captures every claim equally well. Game-based assessment earns its place when it adds unique evidence that simpler formats cannot provide efficiently.

For teams planning adoption, governance matters as much as design. Establish version control for scenarios, define data retention policies, document scoring logic, monitor subgroup performance, and schedule periodic validity reviews. Treat the game as an assessment product, not just a learning experience. That means usability testing with representative users, technical stress testing across devices, and post-launch analysis of score distributions, completion times, and aberrant behavior. If the environment changes, the score meaning may change too. Ongoing maintenance is not optional.
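
Monitoring subgroup performance can begin with a standardized mean difference between two groups' scores, checked after each release. The sketch below uses a pooled standard deviation; the group labels and scores are invented. A gap on this statistic is a reason to open a fairness review, not proof of bias, since groups may also differ on the target competency.

```python
from math import sqrt
from statistics import mean, variance


def standardized_mean_difference(group_a, group_b):
    """Difference in means divided by the pooled standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    if n_a < 2 or n_b < 2:
        raise ValueError("each group needs at least two scores")
    pooled_var = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    if pooled_var == 0:
        raise ValueError("undefined when scores do not vary within groups")
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)


# Hypothetical scores for candidates on desktop versus mobile devices.
desktop = [71, 64, 80, 75, 69]
mobile = [62, 70, 58, 66, 61]

gap = standardized_mean_difference(desktop, mobile)
print(f"desktop vs mobile gap: {gap:.2f} pooled SDs")
```

Tracking the same statistic across scenario versions also gives an early signal when a build change shifts score meaning for one group more than another.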

Game-based assessment offers a compelling addition to modern assessment formats because it can capture how people think and act, not just what they can recall on demand. Its strongest opportunities are richer evidence, higher engagement that supports effort, and closer alignment with real-world decision making. Its toughest challenges are equally clear: validity can be undermined by irrelevant game mechanics, reliability is harder in dynamic environments, fairness requires careful accessibility and bias review, and scoring models must remain defensible. These are not reasons to avoid the format. They are reasons to design it with discipline.

For assessment design and development teams, the most useful mindset is selective adoption. Use game-based assessment where complexity, context, and process data genuinely matter. Keep simpler formats where efficiency and comparability are the priority. Anchor every design in explicit competency definitions, evidence rules, and quality standards. When that foundation is in place, game-based assessment can become a practical hub within a broader assessment strategy, connecting simulations, performance tasks, adaptive testing, and traditional measures into one coherent system. If you are reviewing assessment formats for your program, start by identifying one decision that needs better evidence, then test whether a game-based approach can produce it more credibly than the alternatives.

Frequently Asked Questions

What is game-based assessment, and how is it different from simple gamification?

Game-based assessment is an approach to evaluation in which the assessment is built into the game experience itself. Instead of asking learners to stop playing and answer a separate test, the game captures evidence through the choices they make, the strategies they use, the mistakes they recover from, the speed and accuracy of their responses, and how they adapt to new conditions. In other words, the learner demonstrates knowledge and skills through action, not just through selected answers on a traditional test.

This is very different from simple gamification. Gamification usually means adding game-like elements such as points, badges, leaderboards, timers, or rewards to an otherwise conventional activity. A quiz with stars and sound effects may feel more engaging, but it does not automatically become a game-based assessment. A true game-based assessment is designed so that the rules, challenges, feedback loops, and decision pathways generate meaningful evidence about learning. The game mechanics are not decorative; they are central to what is being measured.

For example, if a learner plays a science simulation where they must balance resources, test hypotheses, and respond to changing environmental conditions, the assessment can capture far more than whether they chose the right answer. It can show how they reasoned, whether they used evidence effectively, how persistently they explored options, and how well they transferred prior knowledge to a new scenario. That makes game-based assessment especially valuable when the goal is to understand complex thinking, problem-solving, and applied performance rather than only recall.

What are the main opportunities of using game-based assessment in education and training?

One of the biggest opportunities is that game-based assessment can measure learning in a more authentic and dynamic way. Traditional tests often isolate skills into short, fixed-response questions, while game-based environments can place learners in realistic situations that require them to analyze information, make decisions, revise plans, and manage trade-offs. This gives educators, trainers, and researchers a richer view of what learners can actually do when knowledge must be applied under pressure or uncertainty.

Another major advantage is engagement. Well-designed game-based assessments can increase motivation because learners feel they are participating in a meaningful challenge rather than completing a disconnected test. This does not just make the experience more enjoyable; it can also improve the quality of evidence collected. When learners are immersed and invested, they are more likely to reveal their genuine strategies, persistence, and problem-solving patterns. That makes the resulting data more informative than data gathered from low-engagement testing situations.

Game-based assessment also creates opportunities for continuous and fine-grained measurement. Because digital systems can log every move, pause, retry, and pathway, designers can examine performance at a level of detail that traditional assessments rarely capture. This supports formative use cases, such as identifying misconceptions early, personalizing instruction, or adjusting difficulty in response to learner progress. In workforce and professional settings, it can help evaluate not only technical proficiency but also collaboration, adaptability, risk judgment, and decision quality in simulated environments.

Finally, game-based assessment can support broader definitions of competence. Many modern learning goals involve creativity, systems thinking, resilience, communication, and strategic reasoning. These are difficult to measure with standard item formats alone. By embedding evidence collection into play, designers can better assess how learners navigate complexity, respond to feedback, and transfer skills across contexts. When done well, this makes assessment more aligned with the kinds of capabilities schools, universities, and employers increasingly value.

What are the biggest challenges and risks in designing effective game-based assessments?

The most important challenge is validity. A game-based assessment must produce evidence that actually supports the intended claims about learner ability. If success in the game depends too heavily on familiarity with game controls, prior gaming experience, reading speed, or trial-and-error behavior, then the assessment may measure the wrong things. Designers have to carefully separate the target skill from irrelevant factors so that performance reflects learning outcomes rather than unrelated advantages or disadvantages.

Another major challenge is balancing engagement with measurement quality. A game that is highly entertaining is not automatically a strong assessment, and a highly controlled assessment may lose the qualities that make game-based approaches valuable in the first place. Designers need to build tasks that are both meaningful to play and psychometrically defensible. That means defining constructs clearly, mapping in-game actions to evidence models, testing whether observed behaviors are interpretable, and ensuring that scoring methods are reliable and fair.

Equity and accessibility are also significant concerns. Not all learners have the same familiarity with digital environments, reaction speed, language background, physical abilities, or access to technology. If these issues are not addressed, the assessment can introduce bias. Accessibility features, alternative interaction methods, thoughtful onboarding, and universal design principles are essential. It is also important to examine subgroup performance carefully to ensure that the tool is not unintentionally privileging one population over another.

There are practical challenges as well. Developing a high-quality game-based assessment often requires collaboration among subject matter experts, assessment specialists, game designers, software developers, and data analysts. This can be costly and time-intensive. In addition, stakeholders may be skeptical if the tool appears less formal than a traditional test, even when it generates stronger evidence. Privacy and data governance must also be managed carefully, especially when systems collect detailed behavioral data. Altogether, the promise is real, but the design burden is substantial, and weak implementation can undermine trust quickly.

How do educators and assessment designers ensure that game-based assessment results are reliable and meaningful?

Reliability and meaning start with a clear assessment framework. Before any game elements are developed, designers need to identify exactly what knowledge, skills, or competencies the assessment is intended to measure. They must define the construct, describe what successful performance looks like, and specify which in-game behaviors count as evidence. This prevents the common mistake of building an engaging game first and trying to justify the assessment later. In strong designs, the evidence model leads the gameplay design, not the other way around.

Once the framework is established, tasks and mechanics should be aligned to specific claims. If the goal is to measure scientific reasoning, for example, the game should require learners to generate hypotheses, interpret data, revise assumptions, and make evidence-based decisions. Scoring should then be tied to those actions through transparent rules, rubrics, analytics models, or a combination of methods. Many effective systems use stealth assessment techniques, where evidence is collected continuously in the background, but even then the scoring logic must be grounded in defensible assessment principles.

Piloting and validation are essential. Designers should test the assessment with diverse learners to see whether players interpret tasks as intended, whether the interface causes avoidable confusion, and whether the collected data truly distinguishes levels of proficiency. Statistical analysis, cognitive labs, usability testing, and expert review all play important roles. In some cases, results should be compared with other measures to evaluate convergent evidence. Ongoing calibration is also important because player behavior can change over time as users become more familiar with the system.

Finally, interpretation should be responsible and contextual. Game-based assessment can generate large volumes of data, but more data does not automatically mean better conclusions. Educators need reporting tools that translate gameplay patterns into usable insights, and they need to avoid overclaiming. A well-designed assessment can provide powerful evidence of how learners think and act, but results should still be considered alongside other information such as classroom performance, observations, and complementary assessments. Reliability improves when the system is technically sound; meaning improves when the results are interpreted carefully and used for appropriate decisions.

In what situations is game-based assessment most useful, and when might traditional assessment still be better?

Game-based assessment is especially useful when the goal is to measure complex performance in context. It works well for domains where learners must integrate multiple skills, respond to evolving information, and demonstrate reasoning through action. Examples include scientific inquiry, clinical decision-making, language use in realistic scenarios, systems thinking, teamwork, cybersecurity response, and problem-solving in uncertain environments. In these settings, traditional tests may capture fragments of knowledge, but game-based assessment can show how that knowledge is actually applied.

It is also valuable when educators want formative insights rather than a single score at the end of instruction. Because the assessment can collect evidence continuously, it can reveal where learners hesitate, which strategies they repeat, when they improve after feedback, and what kinds of support they may need. This makes it particularly useful for personalized learning, simulation-based training, and competency-based education. In professional development and workforce settings, it can provide a safer way to evaluate performance in situations that would be too risky, expensive, or impractical to recreate in the real world.

That said, traditional assessment still has important advantages. If the goal is to measure straightforward factual recall, basic procedural fluency, or large-scale performance under highly standardized conditions, conventional formats may be more efficient, easier to administer, and simpler to score consistently. Traditional assessments are often better when time, budget, technical infrastructure, or psychometric comparability are major constraints. They can also be preferable when stakeholders need clear, familiar reporting for high-stakes decisions.

In practice, the best choice is often not either-or but both-and. Game-based assessment should be seen as one powerful tool within a broader assessment strategy. It is most effective when used where its strengths matter most: authentic performance, rich process data, and applied decision-making. Traditional methods remain useful for