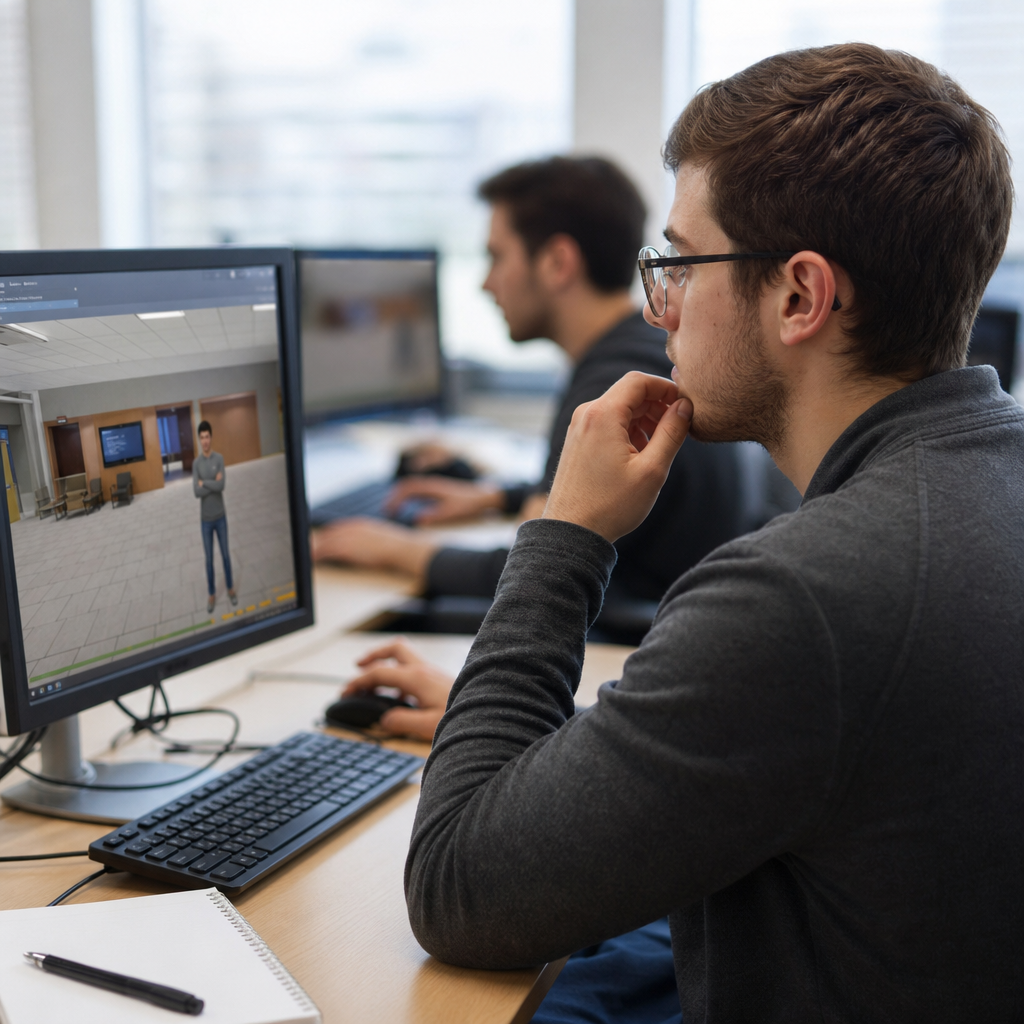

Simulation-based assessments measure what people can do in realistic situations, not just what they can recall on a test. In assessment design and development, this format has become a core option because it evaluates decision-making, procedural skill, judgment, and adaptability inside a controlled environment. A simulation-based assessment can be a virtual patient case, a cybersecurity lab, a flight scenario, a branching customer service interaction, or a manufacturing troubleshooting task. The defining feature is fidelity to real work: candidates respond to conditions, consequences unfold, and scoring is tied to observable actions. As organizations push for stronger evidence of competence, simulation-based assessments sit at the center of modern assessment formats.

In practice, I have seen these assessments outperform traditional item sets when the target skill involves sequence, timing, prioritization, or complex problem solving. A multiple-choice question can ask what a nurse should do first, but a simulation can show whether the nurse notices a deteriorating patient, interprets changing vitals, selects the right intervention, and escalates at the right moment. That difference matters for hiring, certification, licensure, and learning programs. It also matters for fairness and validity. When the job requires applied performance, the assessment format should mirror the job closely enough to support defensible inferences.

This hub article explains simulation-based assessments as a major branch within assessment formats. It covers how they work, where they fit, common design models, scoring approaches, technology choices, quality risks, and use cases across industries. It also clarifies the limits. Simulations are not automatically better than knowledge tests, interviews, or work samples. They are best used when the construct requires contextualized performance and when designers can define clear evidence rules. If you are building an assessment strategy under assessment design and development, this guide will help you decide when to use simulations, how to structure them, and what good implementation looks like.

What a simulation-based assessment is and when to use one

A simulation-based assessment is an evaluation method that places a person in a realistic, structured scenario and captures performance data as the person completes tasks, makes decisions, or interacts with a system. The scenario may be digital, physical, or hybrid. The candidate might diagnose faults in an electrical system, conduct an audit interview with a virtual client, allocate resources during an emergency response, or secure a cloud environment after detecting suspicious activity. Unlike static tests, simulations unfold over time. The candidate receives cues, interprets feedback, and chooses actions that influence later options and outcomes.

The format is most appropriate when success depends on integrated performance. Common targets include clinical reasoning, command decision-making, technical troubleshooting, procedural compliance, situational judgment, communication under pressure, and prioritization. In those cases, the key question is not only whether the candidate knows the rule, but whether the candidate can apply it under realistic constraints. The National Council of State Boards of Nursing (NCSBN) introduced case-based item types built around unfolding clinical scenarios in the Next Generation NCLEX because patient care involves layered clinical judgment rather than isolated fact recall. Aviation has used full-flight simulators for decades because aircraft handling, checklist discipline, and crew coordination cannot be fully evaluated through written exams alone.

Simulation-based assessments should not be used just because they look modern. They require time, budget, and careful design. If the construct is simple factual knowledge, a well-built selected-response test is often more efficient and equally valid. If the target is a stable artifact, a portfolio review may be better. If direct observation in the workplace is feasible and standardized, that may deliver stronger evidence with lower development cost. The right choice depends on the claims you need to support, the consequences of the decision, and the operational reality of your program.

How simulation-based assessments compare with other assessment formats

Within assessment formats, simulations sit between traditional tests and full workplace observation. Selected-response exams are efficient, scalable, and psychometrically mature. Constructed-response tasks reveal reasoning but can be slow to score. Structured interviews capture behavioral examples but rely on self-report and interviewer consistency. Work samples show performance directly, though they may be difficult to standardize. Simulations combine elements of all four. They create a controlled setting like a test, elicit observable behavior like a work sample, and can capture reasoning through embedded prompts or action logs.

The key advantage is representativeness. A cybersecurity analyst can be placed in a sandbox containing live indicators of compromise, suspicious processes, and incomplete logs. The assessment can record whether the analyst identifies the threat, contains it, preserves evidence, and communicates risk appropriately. A standard item bank can ask about incident response stages, but it cannot recreate the pressure of triage. Similarly, in customer support, a branching simulation can assess empathy, policy compliance, and de-escalation in a way that a knowledge quiz cannot.

The tradeoff is complexity. Simulations raise development cost, require stronger technical infrastructure, and create more scoring decisions. They also introduce construct-irrelevant variance if the interface is confusing or if candidates differ in unrelated digital fluency. For that reason, expert teams often map the format decision to the construct first. If a competency framework says “prioritizes competing demands under uncertainty,” simulation is a strong candidate. If it says “recalls tax code thresholds,” a direct knowledge measure may be sufficient. The format should serve the evidence need, not the other way around.

Core design models used in simulation-based assessments

Most effective simulations follow a clear assessment design model. The strongest approach starts by defining the claim, the evidence, and the tasks. If the claim is that a maintenance technician can diagnose hydraulic failure safely, the evidence may include fault isolation steps, use of lockout-tagout procedures, instrument interpretation, and time to restore operation without violating safety rules. The task model then defines the scenario, available tools, distractors, constraints, and scoring opportunities. This logic is often formalized using evidence-centered design, a framework widely used in complex assessment programs because it ties every task feature to an inferential purpose.
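
The claim, evidence, and task chain described above can be written down as a small data model before any scenario is built. The following is a minimal sketch, not a standard evidence-centered design schema: the class names (`EvidenceRule`, `TaskModel`), the `uncovered_claims` helper, and the sample claims are all hypothetical and chosen only for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class EvidenceRule:
    """One observable behavior and the claim it supports (hypothetical structure)."""
    observable: str  # what the candidate does that can be logged or rated
    claim: str       # the inference this observation is meant to support


@dataclass
class TaskModel:
    """A scenario plus the evidence it is designed to elicit."""
    scenario: str
    tools: list[str]
    constraints: list[str]
    evidence_rules: list[EvidenceRule] = field(default_factory=list)

    def claims_covered(self) -> set[str]:
        return {rule.claim for rule in self.evidence_rules}


def uncovered_claims(claims: list[str], tasks: list[TaskModel]) -> list[str]:
    """Return the claims that no task currently provides evidence for."""
    covered: set[str] = set()
    for task in tasks:
        covered |= task.claims_covered()
    return [c for c in claims if c not in covered]


# Illustrative claims and task, loosely based on the maintenance example above.
claims = ["diagnoses hydraulic failure", "follows lockout-tagout", "documents the repair"]
task = TaskModel(
    scenario="Hydraulic press loses pressure during a production run",
    tools=["pressure gauge", "schematic"],
    constraints=["20-minute limit"],
    evidence_rules=[
        EvidenceRule("isolates the faulty valve", "diagnoses hydraulic failure"),
        EvidenceRule("applies lock and tag before opening the line", "follows lockout-tagout"),
    ],
)

print(uncovered_claims(claims, [task]))  # ['documents the repair']
```

A coverage check like this is useful early in design because it exposes claims that no task can support before money is spent on the scenario itself.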

Fidelity also needs deliberate calibration. High fidelity is not always better. In healthcare, a virtual medication administration scenario may need authentic labels, dosing charts, and interruption events, but it may not need photorealistic graphics if those do not improve the evidence. In my experience, teams often overspend on visuals and underspecify scoring rules. Functional fidelity matters more than cinematic fidelity. The environment must reproduce the cues, decisions, and consequences relevant to the construct. It does not need to replicate every sensory detail of the real world.

Designers also choose between linear, branching, and open simulations. Linear simulations move all candidates through the same sequence, which supports comparability. Branching simulations alter later events based on earlier decisions, which increases realism but can complicate scoring and equating. Open simulations, such as software labs or tactical scenarios, allow broad freedom of action and generate rich telemetry. These can be powerful, but only if the scoring model can distinguish productive strategies from noise. Constraint design, cue timing, and reset logic are central. Poorly constrained simulations produce fascinating interactions and weak measurement.

| Format type | Best use | Main strength | Main limitation |
|---|---|---|---|
| Linear scenario | Standardized certification tasks | High comparability across candidates | Less adaptive realism |
| Branching scenario | Judgment and communication | Consequences reflect choices | Harder scoring and maintenance |
| Sandbox or lab | Technical troubleshooting | Captures authentic workflows | Greater scoring complexity |
| Role-play simulation | Interpersonal performance | Reveals communication behavior | Rater training burden |
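
To make the branching model concrete, here is a minimal sketch of a branching customer service scenario stored as a graph. The node names, choices, and point values are invented for illustration and do not come from any real assessment. The sketch also shows why branching complicates scoring: the best attainable score depends on which branch a candidate enters.

```python
# Hypothetical branching scenario: each node maps a choice to (next_node, points).
SCENARIO = {
    "greeting": {
        "acknowledge_frustration": ("diagnose", 2),
        "quote_policy": ("escalated", 0),
    },
    "diagnose": {
        "ask_clarifying_question": ("resolve", 2),
        "offer_refund_immediately": ("resolve", 1),
    },
    "escalated": {
        "apologize_and_reset": ("diagnose", 1),
        "transfer_call": ("end", 0),
    },
    "resolve": {
        "confirm_and_close": ("end", 1),
    },
}


def run_path(choices, start="greeting"):
    """Walk the scenario with a list of choices; return (visited nodes, total points)."""
    node, total, visited = start, 0, [start]
    for choice in choices:
        if node == "end":
            break
        next_node, points = SCENARIO[node][choice]  # KeyError signals an invalid choice
        total += points
        node = next_node
        visited.append(node)
    return visited, total


def best_score(node):
    """Maximum points still attainable from a node (the graph has no cycles)."""
    if node == "end":
        return 0
    return max(points + best_score(nxt) for nxt, points in SCENARIO[node].values())


print(run_path(["acknowledge_frustration", "ask_clarifying_question", "confirm_and_close"])[1])  # 5
print(best_score("greeting"), best_score("escalated"))  # 5 4
```

In this toy graph a candidate who opens badly can recover but can no longer reach the top score. Whether that ceiling is intended is exactly the kind of scoring decision a branching design forces the team to make explicitly.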

Scoring methods, analytics, and validation

Scoring is where many simulation-based assessments succeed or fail. The simplest model uses checklists: did the candidate verify identity, isolate the machine, escalate the incident, or document findings. Checklists are useful for safety-critical steps, but alone they can miss quality differences. More advanced scoring adds weighted actions, sequence rules, time windows, and outcome metrics. In a pharmacy simulation, selecting the correct drug may not be enough; the system may also score dose calculation, allergy verification, patient counseling, and intervention timing. In leadership scenarios, raters may score behavioral indicators such as clarifying goals, acknowledging stakeholder concerns, and making evidence-based tradeoffs.
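
As a rough illustration of how weighted actions, sequence rules, and time windows can sit on top of a checklist, consider the sketch below. It assumes a simple event log of action names with timestamps. The action names, weights, time limit, and penalty are hypothetical values for a pharmacy-style scenario, not a recommended rubric.

```python
from dataclasses import dataclass


@dataclass
class Event:
    action: str
    t: float  # seconds since scenario start


# Hypothetical rubric: all weights, limits, and penalties are illustrative only.
WEIGHTS = {"verify_allergy": 3, "calculate_dose": 3, "select_drug": 2, "counsel_patient": 2}
MUST_PRECEDE = [("verify_allergy", "select_drug")]  # sequence rule: first item before second
TIME_LIMITS = {"select_drug": 300.0}                # time window, in seconds
SEQUENCE_PENALTY = 2


def score(log):
    """Return (total points, notes) for a list of Event objects."""
    first_seen = {}
    for event in log:
        first_seen.setdefault(event.action, event.t)  # keep the earliest occurrence

    total, notes = 0, []
    for action, weight in WEIGHTS.items():
        if action not in first_seen:
            notes.append(f"missed: {action}")
            continue
        limit = TIME_LIMITS.get(action)
        if limit is not None and first_seen[action] > limit:
            notes.append(f"late: {action}")
            continue
        total += weight

    for before, after in MUST_PRECEDE:
        out_of_order = after in first_seen and (
            before not in first_seen or first_seen[before] > first_seen[after]
        )
        if out_of_order:
            total -= SEQUENCE_PENALTY
            notes.append(f"out of order: {before} should precede {after}")

    return max(total, 0), notes


log = [Event("select_drug", 40), Event("verify_allergy", 75), Event("calculate_dose", 120)]
total, notes = score(log)
print(total)  # 6
```

Here the candidate earns 8 points for the three completed steps and loses 2 for selecting the drug before checking allergies. Returning the notes alongside the total keeps the score explainable to candidates and reviewers.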

Digital simulations generate process data that paper tests cannot. Clickstreams, command histories, dwell time, path efficiency, hint use, revision behavior, and communication transcripts can all become evidence, if used carefully. This is where learning analytics and natural language processing often enter the workflow. However, more data does not automatically mean better inference. Every variable used in scoring must connect to the construct and be evaluated for bias. A long completion time might indicate persistence, confusion, accessibility needs, or poor interface design. Without validation, telemetry can become pseudo-precision.
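
For a sense of what process data looks like before it becomes evidence, the sketch below derives dwell time per screen from a clickstream. The event format (a timestamp plus the screen the candidate navigated to) and the screen names are assumptions made for illustration; real platforms log in their own formats.

```python
from collections import defaultdict

# Hypothetical clickstream: (seconds since start, screen the candidate navigated to).
events = [
    (0, "chart"),
    (42, "vitals"),
    (65, "orders"),
    (80, "vitals"),
    (130, "orders"),
    (150, "end"),
]


def dwell_times(events):
    """Sum the time spent on each screen using consecutive navigation events."""
    totals = defaultdict(float)
    for (t0, screen), (t1, _next_screen) in zip(events, events[1:]):
        totals[screen] += t1 - t0
    return dict(totals)


print(dwell_times(events))  # {'chart': 42.0, 'vitals': 73.0, 'orders': 35.0}
```

A figure such as 73 seconds on the vitals screen is only a descriptive feature. It should enter scoring only after the team has evidence that it reflects the construct rather than confusion, accessibility needs, or interface friction.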

Validation should cover content alignment, response processes, internal structure, relation to other measures, and consequences of use. That means subject matter experts review realism and task relevance, candidates complete think-aloud studies or usability trials, psychometricians analyze reliability, and program leaders examine whether pass-fail decisions track meaningful outcomes. In employment testing, when the construct calls for applied performance, simulation scores should predict job performance indicators more strongly than less representative measures do. In licensure, standard setting often uses modified Angoff, bookmark, or performance panel methods tailored to the simulation tasks and scoring scale.
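
The arithmetic behind an Angoff-style cut score is simple enough to show directly. In the sketch below, each judge estimates the probability that a minimally competent candidate would perform each scored step correctly, and the cut score is the average of the judges' summed estimates. The judges and ratings are invented, and the sketch covers only this basic aggregation step. Modified Angoff procedures typically add discussion rounds and feedback between ratings, which are not modeled here.

```python
from statistics import mean

# Hypothetical ratings: one list per judge, one probability per scored step.
ratings = {
    "judge_a": [0.9, 0.7, 0.6, 0.8],
    "judge_b": [0.8, 0.6, 0.5, 0.9],
    "judge_c": [0.9, 0.8, 0.4, 0.7],
}


def angoff_cut_score(ratings):
    """Average, across judges, of each judge's summed step probabilities."""
    return mean(sum(judge_ratings) for judge_ratings in ratings.values())


print(round(angoff_cut_score(ratings), 2))  # 2.87 out of 4 scored steps
```

With these illustrative numbers, a candidate would need roughly 2.87 of the 4 available points to pass. In practice the panel would also review how much the judges disagree before adopting the value.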

Technology platforms, delivery choices, and operational constraints

Simulation delivery ranges from low-tech tabletop exercises to immersive virtual reality. The correct platform depends on the target behavior, not on trend value. Web-based branching scenarios are effective for customer support, compliance, and many judgment tasks because they scale well and work on standard devices. Secure labs are common in information technology and data analysis because candidates need realistic tools such as terminal access, SQL environments, or observability dashboards. Manikin-based simulations remain central in nursing and emergency medicine because physical technique, team coordination, and rapid intervention are part of the construct. Virtual reality helps when spatial navigation, hazard recognition, or equipment interaction is essential, such as warehouse safety or industrial operations.

Operational requirements shape feasibility. High-stakes programs need identity verification, device checks, browser control where relevant, accessibility review, incident logging, and contingency planning for outages. Latency can distort performance in cloud labs. Audio quality can affect role-play ratings. Browser incompatibility can create unfair barriers. I have seen otherwise strong designs fail in launch because organizations underestimated support load and reset procedures. Candidates need practice environments, clear instructions, and a way to recover from technical faults without invalidating the score.

Accessibility deserves explicit attention. A simulation can be realistic and still exclude qualified people if the interface assumes unrestricted vision, hearing, fine motor control, or prior familiarity with a niche tool. Applying principles from the Web Content Accessibility Guidelines, providing keyboard navigation where possible, offering compatible screen reader structures, and distinguishing construct-relevant speed from arbitrary time pressure all improve defensibility. Accessibility is not a postproduction patch. It changes scenario design, interface choices, and scoring rules from the start.

Use cases across industries and common implementation mistakes

Simulation-based assessments now appear across nearly every sector. In healthcare, they evaluate clinical judgment, emergency response, sterile technique, and handoff communication. In aviation, recurrent simulator checks assess abnormal procedures, crew resource management, and adherence to standard operating procedures. In finance, analysts may complete trading or fraud-detection scenarios using live data feeds. In manufacturing, technicians troubleshoot equipment faults while following safety protocols. In software engineering, candidates work in containerized environments to debug code, review pull requests, or stabilize a failing service. In public safety, dispatch and command simulations test prioritization, communication, and incident management under pressure.

The most common implementation mistake is building the scenario before defining the evidence. Teams get excited about storylines, avatars, or immersive visuals and only later ask what exactly should be scored. The second mistake is overloading one simulation with too many competencies. A single emergency department scenario might try to measure clinical reasoning, empathy, teamwork, ethics, documentation, and resilience at once. That usually weakens reliability because the evidence for each claim becomes thin. A better design samples fewer constructs per task and uses multiple shorter scenarios. The third mistake is treating simulation outputs as self-evident. If a candidate failed, was it poor judgment, unfamiliar controls, reading load, cultural mismatch in prompts, or a network interruption? Without pilot data and review, interpretation stays fragile.
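
One way to see why several shorter scenarios tend to beat one overloaded scenario is the Spearman-Brown prophecy formula, which projects reliability when a measure is lengthened with parallel tasks. The sketch below applies it to a hypothetical single-scenario reliability of 0.40. The formula assumes the added scenarios measure the same construct equally well, which real scenarios only approximate.

```python
def spearman_brown(reliability, k):
    """Projected reliability when a measure is lengthened k-fold with parallel tasks."""
    return k * reliability / (1 + (k - 1) * reliability)


# Hypothetical starting point: one scenario with reliability 0.40 for a given construct.
for n_scenarios in (1, 2, 4):
    print(n_scenarios, round(spearman_brown(0.40, n_scenarios), 2))
# 1 0.4
# 2 0.57
# 4 0.73
```

Under these assumptions, four focused scenarios on one construct move a weak estimate into a more usable range, while a single scenario spread across six constructs leaves each one with too little evidence.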

As the hub page for assessment formats within assessment design and development, this article points to the broader lesson: format choice is a validity decision. Simulation-based assessments are powerful because they reveal applied performance in context, often with richer evidence than static tests can produce. They are especially valuable when decisions carry risk and when real-world practice is too costly, dangerous, or inconsistent to observe directly. Used well, they improve alignment between what matters on the job and what gets measured. If you are refining an assessment strategy, audit your competencies, identify where context changes performance, and prioritize simulations where that added realism will produce better decisions.

Frequently Asked Questions

What is a simulation-based assessment?

A simulation-based assessment is a testing format that evaluates how a person performs in a realistic, job-relevant, or role-specific situation rather than measuring only what they can remember on a traditional written exam. Instead of asking someone to select the correct answer from a list, it places them in a controlled scenario where they must make decisions, apply knowledge, follow procedures, solve problems, and respond to changing conditions. The goal is to capture performance in action.

These assessments can take many forms. A healthcare learner may work through a virtual patient case and choose how to diagnose and treat symptoms. A cybersecurity candidate may respond to a simulated attack inside a lab environment. A pilot may complete a flight scenario that tests navigation, communication, and emergency handling. A customer service employee may move through a branching conversation with a frustrated client. In manufacturing or technical roles, a simulation may require troubleshooting equipment faults under time pressure.

What makes this approach valuable is that it measures applied competence. It can reveal whether someone can integrate technical knowledge, practical skill, judgment, and adaptability when conditions feel realistic. That is why simulation-based assessments have become a core option in assessment design and development, especially in fields where performance matters as much as, or more than, factual recall.

How are simulation-based assessments different from traditional tests?

The biggest difference is that traditional tests usually focus on recognition and recall, while simulation-based assessments focus on performance and decision-making. A multiple-choice exam can show whether a person knows a rule, definition, or best practice. A simulation can show whether that person can actually apply the rule at the right time, in the right sequence, and under realistic constraints.

Traditional tests are often highly efficient, easy to score, and useful for broad knowledge measurement. They work well when the goal is to check foundational understanding. Simulation-based assessments go further by introducing context. They can include incomplete information, competing priorities, dynamic consequences, and time-sensitive choices. This makes them better suited for evaluating procedural skill, critical thinking, communication, and judgment.

For example, it is one thing to ask a learner which action should be taken first in an emergency. It is another to place that learner in a realistic scenario where alarms are sounding, data is changing, and the correct response depends on interpreting multiple signals at once. In that setting, the assessment measures not only knowledge, but also situational awareness, prioritization, and execution. That added realism is exactly why simulation-based assessments are so useful when organizations need stronger evidence of real-world readiness.

What skills do simulation-based assessments measure best?

Simulation-based assessments are especially effective for measuring skills that depend on context, action, and judgment. They are well suited to evaluating decision-making, procedural competence, troubleshooting, communication, prioritization, adaptability, and problem-solving. They can also measure how well a person responds to unexpected changes, manages pressure, and recovers from errors.

This format is particularly valuable when success depends on doing the right thing in the right order. In a clinical setting, that may involve recognizing symptoms, ordering appropriate actions, and adjusting treatment based on patient response. In a technical setting, it may involve diagnosing system failures, interpreting logs or signals, and selecting an efficient resolution path. In customer-facing roles, it may involve listening carefully, choosing the right tone, de-escalating conflict, and balancing policy with empathy.

Because simulations can be designed around realistic tasks, they are also useful for measuring integrated performance rather than isolated abilities. A well-built scenario can capture how knowledge, process, behavior, and judgment work together. That gives educators, employers, credentialing bodies, and training teams a more complete picture of capability than a test that measures only memorization or abstract reasoning.

Why are simulation-based assessments considered more realistic and useful?

They are considered more realistic because they mirror the kinds of situations people actually face in education, training, and work. Real performance rarely happens in a vacuum. People must interpret information, make choices with consequences, follow procedures, communicate effectively, and adapt when circumstances change. Simulation-based assessments recreate those conditions in a structured and measurable way.

That realism matters because it strengthens the connection between assessment results and real-world performance. When someone succeeds in a thoughtfully designed simulation, the result can provide stronger evidence that they are prepared to perform outside the testing environment. This is especially important in high-stakes domains such as healthcare, aviation, cybersecurity, public safety, technical operations, and customer support, where performance errors can have serious consequences.

They are also useful because they support richer feedback. Instead of reporting only whether an answer was correct or incorrect, a simulation can show where a person hesitated, which path they chose, whether they followed the correct sequence, how efficiently they solved the problem, and how they responded when the situation became more complex. That level of detail makes simulation-based assessments valuable not only for selection and certification, but also for learning, coaching, and continuous improvement.

What makes a simulation-based assessment effective and trustworthy?

An effective and trustworthy simulation-based assessment begins with strong design. The scenario should reflect meaningful real-world tasks and align clearly with the skills or competencies being measured. Every decision point, action, prompt, and consequence should serve a purpose. If the simulation includes tasks that are unrealistic or unrelated to the intended outcomes, the assessment may feel engaging but fail to measure what actually matters.

Quality also depends on standardization and scoring. Even though simulations may feel dynamic, they still need clear rules, consistent administration, and defensible evaluation criteria. Scoring models should be based on observable performance, such as accuracy, sequence, timing, prioritization, safety, efficiency, or quality of judgment. In many cases, assessment designers use rubrics, automated scoring logic, expert review, or a combination of methods to ensure results are fair and reliable.

Finally, trustworthiness comes from evidence. Developers should test whether the simulation works as intended, whether users understand the tasks, whether scores are consistent, and whether results meaningfully relate to actual performance. Accessibility, usability, technical stability, and bias review are also essential. When those elements are handled well, simulation-based assessments become far more than interactive exercises. They become credible tools for evaluating what people can truly do in realistic situations.